A Fear of Tar Snakes

In which I confirm via thousands of critical-intervention-free miles that Tesla’s self-driving is wildly impressive, despite nitpicks

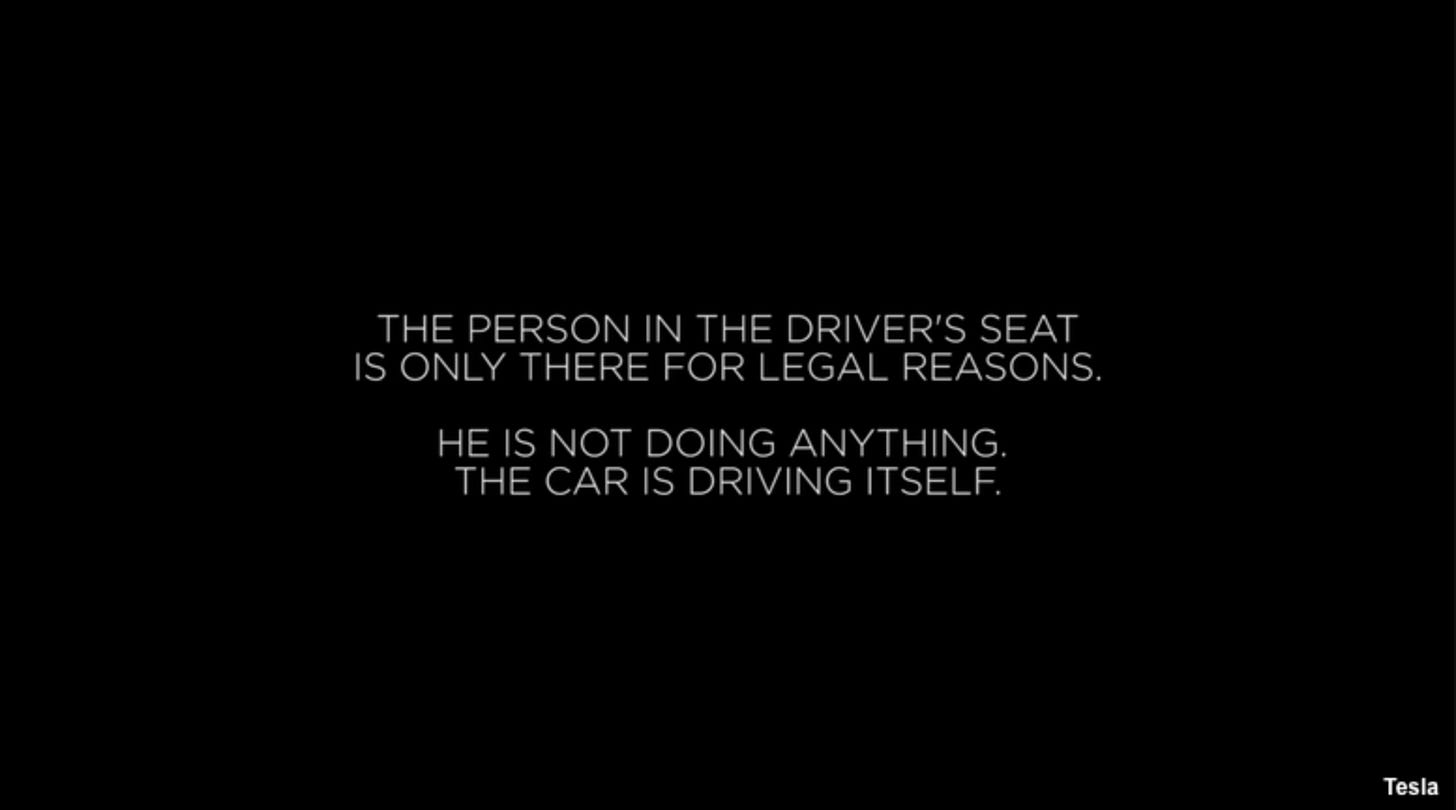

It’s been about 10 years since Tesla staged their ignominious “paint it black” video. Elon Musk emailed employees internally that he wanted “an amazing Autopilot demo drive” and since it was just a demo, it was “fine to hardcode some of it”. He assured employees that it was just to show what would be possible in the future. Then, at Musk’s insistence, Tesla released the video with what turned out to be a wildly deceptive splash screen:

Musk said the same on Twitter:

Tesla drives itself (no human input at all) thru urban streets to highway to streets, then finds a parking spot. When searching for parking, the car reads the signs to see if it is allowed to park there, which is why it skipped the disabled spot. 8 cameras, 12 ultrasonars and radar…

Finally, now, 10 years later, those things seem to be true. Minus the part about radar and ultrasonar. And possibly the part about reading signs. (More on that later.)

A couple years after that video and tweet, Apple engineer and Musk believer Walter Huang was playing a game on his phone when his Tesla drove into an embankment and killed him. In the ensuing wrongful death lawsuit, it came to light that the video showcasing Tesla’s supposed self-driving was spliced together from multiple takes, involved many disengagements, elaborate pre-mapping of the route, and one crash into a fence while parking (the part with an empty driver’s seat).

I keep not getting it through my head how willing Musk is to baldfacedly lie. Like all the knots I twisted myself into to interpret his characterization of the autonomous delivery in June 2025. I imagined him trying earnestly to spin it in the best possible light and accidentally conflating “no human input” with “unsupervised” (very different things, as I’ve been harping on endlessly). In retrospect it’s at least plausible that that demo was almost as fake as the 2016 video.

And yet.

As I mentioned last week, I rented a self-driving Tesla with the latest hardware and software to see for myself, driving from Portland, Oregon, to Colorado and back. Sure enough, well over 2000 miles (and counting — I’m typing this from the passenger seat as another family member yawns and looks around lazily from the driver’s seat) with zero safety-critical interventions.

It’s really uncanny how human-like it is. A striking example was when it needed to do a U-turn to get back on the freeway after a charging stop. It got its green arrow, started the U-turn, and then another car pulled out from the left and we found ourselves like the north-going Zax and south-going Zax. Just for a split second. Someone needed to yield and with us being halfway through the U-turn it made more sense for the other car to yield. Which it did, waving us on. I don’t know if it saw the other driver’s hand motion but throughout the maneuver the Tesla acted exactly the way I would’ve, down to the micro-motion.

Or when a pedestrian wasn’t yet waiting at a crosswalk but was juuuust close enough that I was unsure myself if it made sense to wait for them, you could feel the car hesitate, basically trying to decide what to do. In that case it decided to just go for it, which was fine, and I was ready to hit the brakes if it had seemed prudent.

Not that it never deviated from what I would’ve done. But pretty close. It even outdid me once. I thought it was glitching out when it seemed to spontaneously put on its blinker and started pulling onto the shoulder of a two-lane road. Turns out there was a truck approaching from behind, straight out of the Mad Max franchise, weaving around cars and crossing the double yellow line to pass people. So, yeah, good call, car. Or shame on me for paying insufficient attention (more on that later).

(And another point for the car later: it spotted and waited for a pedestrian who was waiting to cross but far enough from the curb that I would’ve likely missed them myself.)

It’s almost frustrating how impeccable it’s been. Had there been even a single blatant error, we’d have definitively settled the question of whether this is level-4 capable. But, to re-beat this drum, a couple thousand miles without incident is only weak Bayesian evidence that this car will kill you less often than a human driver would, namely every 100-ish million miles. (Waymos, by contrast, we absolutely know are superhumanly safe, with their 200 million empty-driver’s-seat miles and zero[1] fatalities.)
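As a rough illustration of how weak that evidence is, here is a minimal sketch that treats fatal crashes as a Poisson process. It compares two hypotheses: the car matches the human rate of one fatality per 100 million miles, or it is ten times worse. The Poisson model, the ten-times-worse alternative, and the function names are all illustrative assumptions, and 2,400 is just this trip's approximate mileage.

```python
import math


def prob_zero_events(miles: float, miles_per_event: float) -> float:
    """Poisson probability of seeing no events over `miles`,
    given an average of one event every `miles_per_event` miles."""
    return math.exp(-miles / miles_per_event)


def likelihood_ratio(miles: float, miles_per_event_a: float,
                     miles_per_event_b: float) -> float:
    """How much more likely an event-free drive is under hypothesis A
    than under hypothesis B."""
    return (prob_zero_events(miles, miles_per_event_a)
            / prob_zero_events(miles, miles_per_event_b))


TRIP_MILES = 2_400                       # approximate length of this road trip
HUMAN_MILES_PER_FATALITY = 100_000_000   # the "100-ish million miles" figure
WORSE_MILES_PER_FATALITY = 10_000_000    # hypothetical: ten times worse than human

ratio = likelihood_ratio(TRIP_MILES,
                         HUMAN_MILES_PER_FATALITY,
                         WORSE_MILES_PER_FATALITY)
print(f"Likelihood ratio (human-level vs. 10x worse): {ratio:.6f}")
```

The ratio comes out around 1.0002. In other words, a fatality-free trip of this length is almost exactly as likely under either hypothesis, so it barely shifts the odds between them.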

Still, seeing is believing, and I absolutely have become more inclined to believe that Tesla really has pulled this off. There seems to be almost nothing it can’t handle. I mentioned pedestrians, aggressively reckless drivers, and polite deferential drivers. Also parking, including parallel parking, merging, waiting for traffic lights, you name it. At four-way stops it seems to expertly intuit the intent of other drivers and confidently signal its own intent, following the turn-taking protocols or adjusting when other drivers violate them. It even handles treacherous mountain gravel roads:

It ever-so-slightly bottomed out once, but no worse than I would’ve. It knew exactly what speed made sense for that barely-a-road.

Traffic circles were also no problem. It did exit one at the wrong place once — presumably a navigation error (more on those below).

We did see one instance of the car freaking out and asking the human to take over, but this was again impeccably correct. It tried to use the wiper fluid while leaving a parking lot (that’s a thing it spontaneously does sometimes) and with sub-freezing temperature and a lot of dead bugs it made a mess of the windshield and suddenly neither we nor it could see. So we hit the brakes, but all indications are that it too was coming to a stop until the windshield situation was sorted. Nor was there any actual danger regardless, at that speed.

Complaints and Quibbles

Ok, enough well-earned praise. I’ve been gushing about how human-like it is, but it takes this too far in some ways. For example, it doesn’t respect a 2-second following distance. There’s not even a setting for that. Putting it in Chill Mode or even Sloth Mode doesn’t help. And putting it in Sloth Mode is the only way to get it to follow the speed limit.[2] And even then, the car seems mostly oblivious to speed limit signs, generally going with whatever the map data tells it the speed limit should be. On the plus side, it follows the flow of traffic well.

(Funny story: There’s a handy physical dial on the steering wheel for choosing the speed profile. Besides Sloth and Chill there’s Standard, Hurry, and Mad Max. Leaving Oregon, with the highway devoid of other cars, of course I experimented with dialing it all the way to Mad Max. The car crept up to about 85 mph when the speed limit was 70 or 75 mph and, sure enough, we got pulled over. I resisted the urge to blame the car and got let off with a warning.)

And actually, it might be slightly worse than merely oblivious to the speed limit signs. Occasionally it sees a sign indicating minimum speed or the speed limit for trucks and takes that to be the speed limit. Bumping it up from Sloth Mode is the only way to get it to disregard its mistaken impression of the speed limit.

The car gets minor demerits for taking longer than necessary to realize when a lane is turn-only. In one case it was oblivious to signage and kinda swerved into and immediately back out of a turn lane. Perfectly human mistakes though. And one time we thought it was confused when it used its blinker to initiate a pass when there was seemingly no room to do so. But just then a passing lane appeared, so presumably it knew about that from map data.

Another minor demerit for hanging out in another car’s blind spot for a couple minutes. But just one time. Mostly it seems astute about relative positioning and was overall on par with typical human driving behavior in this regard.

In general it seems so uncannily human-like that I have to wonder if, in a real emergency, it would wait the standard ~200 milliseconds of human reaction time before braking, just to impeccably match what a human would do. (I don’t actually think it would, but everything else seems so tuned to human driving behavior, I have to wonder.)

The biggest potential safety issue we saw was imperfect understanding of static road hazards. Multiple times it dodged tar snakes. (Do non-cyclists even know what these are? They’re strings of tar used to fill in and smooth out cracks in the road — not something car drivers ever need to think about.) One time, mid-swerve, the Tesla put on its blinker and changed lanes as if it was saying “I meant to do that.”

It does, for the most part, deftly avoid road debris. But one time it managed to swerve into a tire fragment dead center in the lane that would’ve passed harmlessly between the wheels of the car. Hitting it was also harmless, to be clear. But clearly the car didn’t know that, else it wouldn’t have swerved in the first place.

There’ve been some other instances of phantom dodging. Like for random patches of differently colored pavement that, to human eyes, were unambiguously fine to drive over.

The worst was a failure to react to some dirt clods falling off a truck that visibly looked like they could’ve been bouncing rocks. They turned out not to be a safety hazard but actually might’ve done very, very slight damage to the car, I’m not sure. Also, ahem, proper following distance could’ve avoided that. But given the situation I guess I didn’t have time to react either, so it’s unclear how much blame the car deserves there.

It’s actually possible I was insufficiently attentive at that point because the car is otherwise so good that I’m constantly lulled into complacency about supervising it. The car is shockingly permissive about this too. There’s an in-cabin camera watching to confirm the person in the driver’s seat is watching the road. But, from our careful testing, it can sometimes, depending on where exactly you’re looking, allow minutes-long lapses of attention.

If it really is superhumanly safe, that’d be ok. I sure wish we knew if it is.

Safety aside, navigation was hit-or-miss. A couple times it took a completely wrong turn, requiring a non-critical disengagement to turn the car around. But ultimately that was rare enough to merely count as a quibble. We just kept Google Maps navigation on one person’s phone as a sanity check.

PS: Electric cars are amazing

Not touching the steering wheel or pedals for 2400 miles of road-tripping was pretty mind-blowing. This was also my first road trip in an electric car and I was highly impressed by that as well. It’s genuinely no less convenient than gas cars and the cost of charging is about half the cost of gas. At least on this 2026 Tesla, the charging is insanely fast, especially when the battery is low, so you can charge for 10 minutes or so and get another couple hours of driving. And the superchargers are absolutely everywhere. It even picks the stops for you, and of course parks itself in the spot for charging.

So, yeah, I hate to support Elon Musk in any way since he seemingly went literally insane a couple years ago, but this is so insanely impressive I can’t help it. Presumably other car companies will catch up soon (I guess in a couple years, optimistically, you’ll be able to buy a Toyota with Waymo tech). But I don’t think that will happen soon enough to change the advice: if you’re buying a car today, it should be a Tesla.

I’ve tried extremely hard to disbelieve this, but here we are.

UPDATES

I continued to supervise the Tesla as it drove around Portland until it was time to return it. Still no safety-critical interventions but it did ignore a fully unambiguous “no turn on red” sign. I let it do it, for science, and it did make the turn safely enough, despite the illegality. It cemented my assessment that the car’s understanding of signage in general is abysmal. Perhaps this matters less for the robotaxi program operating in fixed, geo-fenced areas. That’s a limitation Musk has mocked Waymo for but I believe Waymo’s system is proving more generalizable to new domains than Tesla’s.

Yet more evidence of the car’s illiteracy: it got itself stuck in a parking garage, unable to follow the exit signs. It seemed to be stuck in a loop where it drove all the way to the bottom, carefully turned itself around (very impressively, to be clear), and then drove all the way to the top, where the whole cycle repeated until we (non-safety-critically) intervened.

[1] See the previous AGI Friday in which I discussed a couple multi-vehicle crashes that technically included Waymos and fatalities but in both cases the Waymo was so clearly not at fault that it’s fair to call it zero deaths from Waymos.

[2] “That’s the most Elon Musk thing I’ve ever heard,” comments my friend Christopher.

Your experience on road trips mostly matches mine (2023 Model Y, HW4) but on local trips in the Midwest I’d estimate I make at least one safety-critical disengagement a month (under a thousand miles). The frustrating thing is that it’s difficult to mentally model where it struggles, and there are often behavioral regressions between releases. Maybe this is what it’s like to be a driver's ed instructor, constantly being driven around by a young driver with different strengths and weaknesses.

The past month has been particularly bad, where it’s struggled with timidity and hallucinations. Some recent disengagements I remember:

- It started to brake and pull over twice due to flashing (nonemergency) lights on the other side of a stroad

- Yesterday it had a green right turn but braked harshly when an opposing car started initiating their unprotected left, infuriating the car behind us (then it randomly activated and cancelled its turn signal twice trying to decide what freeway lane was correct, and the same car following us really got road rage)

- Today it braked harshly in a roundabout when another car was safely starting to creep in

Those were split between my wife and me driving, and neither of us is able to react quickly enough to cancel the braking, which was unwarranted and confusing. Fortunately no one has been tailgating us during these events and the worst damage FSD has caused us was just curbing a wheel while parking.

Overall, Teslas and FSD are quite impressive and I love having it, but it’s too easy to get a false sense of security, and it’s plausible to me that it could require *more* driving experience to use FSD safely than it does to just drive the Tesla normally.

Yeah, I won't be considering buying a Tesla until Elon is 100% out of the company.