Levels of Autonomy

It's not just for self-driving cars anymore

(Thanks to Charlie Sanders for cogent pushback in the comments of last week’s post. I’ve posted my reply with more reasons I don’t think we can reason our way to sanguinity on superintelligence. Debunking arguments for near-certain doom, sure, I’m all about that. It’s just that people persist in conflating that with “so things will probably work out”. And all I’m saying is “probably” doesn’t cut it. Ok, on to this week’s topic!)

I was impressed enough with Charlie Sanders’s comment to check out their Substack and found an excellent post on AI writing: “We Need to Be Able to Talk About AI Use”.

Then my friend Christopher Moravec posted “The Levels of AI-Assisted Software Development” over on Almost Entirely Human.

Both of those reminded me of the official autonomy levels for self-driving cars. So I’d like to propose a nice generalization for applying such a hierarchy to any previously human task AI can now help with. Let’s start with a review of the well-established car version that we can take as a template:

Level 0 is an old-school car, no AI at all

Level 1 is cruise control, lane-keeping, and similar driving assistance

Level 2 is self-driving with the human ready to yank back control if the car screws up

Level 3 means the human is ready to take control in real time if the car beeps

Level 4 means no human in the driver’s seat, the car safely stops and asks humans for help if confused (think Waymo)

Level 5 is the AGI of self-driving, with no human input needed ever (other than saying where to go, I suppose)

Taking heavy inspiration from Sanders, here’s a version for writing:

Level 0 is old-school writing

Level 1 is spellcheck, grammar check, LLM thesauri, and similar tools

Level 2 means AI offers outlines and ideas but the writing stays fully in the human’s voice

Level 3 means a human curating AI-generated prose

Level 4 means the human merely prompts the AI, with no further input

Level 5 is the AGI of writing, with no human input needed at all

Notice that I’ve shifted everything so level 0 is still the all-human one and added level 5 for full AGI. Now let’s do the same with Moravec’s levels of automated coding:

Level 0 is old-school coding (compilers allowed)

Level 1 is fancy autocomplete that may save tons of keystrokes but the human vets every line

Level 2 is a chatbot integrated into a development environment that contributes arbitrary amounts of code in collaboration with the human

Level 3 means the human ditches the development environment and just tells the AI in English what to implement and how

Level 4 is pure vibe-coding, or agentic engineering, as Christopher prefers to call it, where you just prompt the AI with what software you want and abdicate all architectural decisions

Level 5 is AGI where the AI doesn’t even need to be prompted

By level 4 the human is a product manager rather than a developer. At level 5 the whole concept of software dissolves. Or rather software materializes as needed for end users with no other humans ever stepping in. But that’s still years (plural! almost certainly plural) in the future.

I think these hierarchies are hugely helpful for the general discourse and for being specific about what you’re in favor of or against. Here’s my generalization from all three of the above instances (driving, writing, coding):

Level 0: No assistance

Level 1: Tool assistance

Level 2: Human fully in charge and responsible at all times

Level 3: Human as final authority

Level 4: Human only provides input when needed

Level 5: No human

Or, extra succinctly:

Level 0: No AI (All Human)

Level 1: Tool AI

Level 2: Assistant AI

Level 3: Directed AI

Level 4: Autonomous AI

Level 5: AGI (No Human)
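If you want to tag tools or workflows with these levels, here is a minimal sketch of the hierarchy in Python. The names `AutonomyLevel`, `HUMAN_ROLE`, and `within_red_line` are hypothetical, invented for illustration. The role strings are copied from the generalized list above.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    """The succinct names, numbered so that higher means less human involvement."""
    NO_AI = 0
    TOOL = 1
    ASSISTANT = 2
    DIRECTED = 3
    AUTONOMOUS = 4
    AGI = 5


# The human's role at each level, as worded in the generalized list.
HUMAN_ROLE = {
    AutonomyLevel.NO_AI: "No assistance",
    AutonomyLevel.TOOL: "Tool assistance",
    AutonomyLevel.ASSISTANT: "Human fully in charge and responsible at all times",
    AutonomyLevel.DIRECTED: "Human as final authority",
    AutonomyLevel.AUTONOMOUS: "Human only provides input when needed",
    AutonomyLevel.AGI: "No human",
}


def within_red_line(level: AutonomyLevel, red_line: AutonomyLevel) -> bool:
    """True if `level` does not go past the highest level someone accepts."""
    return level <= red_line


# Example: a position that accepts level 2 for some task but nothing beyond it.
red_line = AutonomyLevel.ASSISTANT
for level in AutonomyLevel:
    verdict = "ok" if within_red_line(level, red_line) else "past the line"
    print(f"Level {int(level)} ({level.name}): {HUMAN_ROLE[level]} -> {verdict}")
```

Using an `IntEnum` keeps the levels ordered, so "no higher than level N" is a plain comparison.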

As regular readers know, I’m a huge fan of level 4 self-driving cars. Namely, Waymo. I’m still holding my breath on whether Tesla is about to hit level 4 with only cameras. (Maybe I’m taking some cheat breaths now and then.)

If you’re a computer programmer and not at least experimenting with levels 3 and 4, you are missing out. If you’re resisting even level 2, you’re really missing out. See last week’s post for what this means for recursive self-improvement of AI. I’m in awe of what Christopher can do at level 4 and am not exactly there yet myself but I’m trying.

For writing, though, I’m practically a Luddite. I vehemently oppose going past level 2 for writing. As a reader I recoil from writing that’s remotely redolent of robots.[1] My red line for still counting as level 2 writing is that you can’t ever let an LLM put words in your mouth. If an LLM comes up with something sufficiently good you can quote it explicitly. In other words, I’m drawing the line at the same place you would for plagiarism. Not that I’m suggesting the ethical line is the same. Just in terms of what I think the writing norms should be and what I want as a reader. See also my previous post on AI writing.

Random Roundup

AI haters should like the AI math update from MathArena suggesting that the top AI models, if you ask them to prove a theorem that’s false, will obligingly bullshit their way through a bogus proof. But they’re doing it steadily less and if you’re pinning your “AI is a sham” hopes on math, hoo-boy, I don’t know what to tell you. Maybe sample some other parts of MathArena.ai. I just learned that the top models can solve close to 90% of [recent] Project Euler problems for a total token cost of under $100. [UPDATE: They evaluated “20+” recent Project Euler problems, not all 900+ as I originally wrote.] There exist humans that can top that but I sure am not one of them. I’m up to 133 problems and I started in, um, 2007.

The closest I get to level 4 autonomy for programming is one-off apps. Like just yesterday we had a big family discussion (thanks also to Serine Molecule) about the physics of vision and I had Claude make a slick demo with basically a single prompt.[2] If this kind of thing were all that AI could do it would already be beyond flabbergasting and have pretty wild implications for the future. It’s such a powerful tool for learning, for starters. (And, as I think Zvi Mowshowitz originally put it, an equally powerful tool for avoiding learning.)

I like the new Pro-Human AI Declaration and am impressed by how much common ground it finds across the whole spectrum of opinion, from the “stochastic parrot” pooh-poohers to the die-hard doomers and the masses of more reasonable people in between. Here’s a fun video with a run-down of it:

[1] Disclosure: I ran that sentence by the golems when it was “as a reader I’m allergic to text that’s remotely redolent of robots” and asked for ideas for turning the alliteration up to eleven and Claude thought of “recoil”. The rest was all me, I swear it.

[2] Here’s the entirety of what I said to Claude, in a fresh window, to get that app:

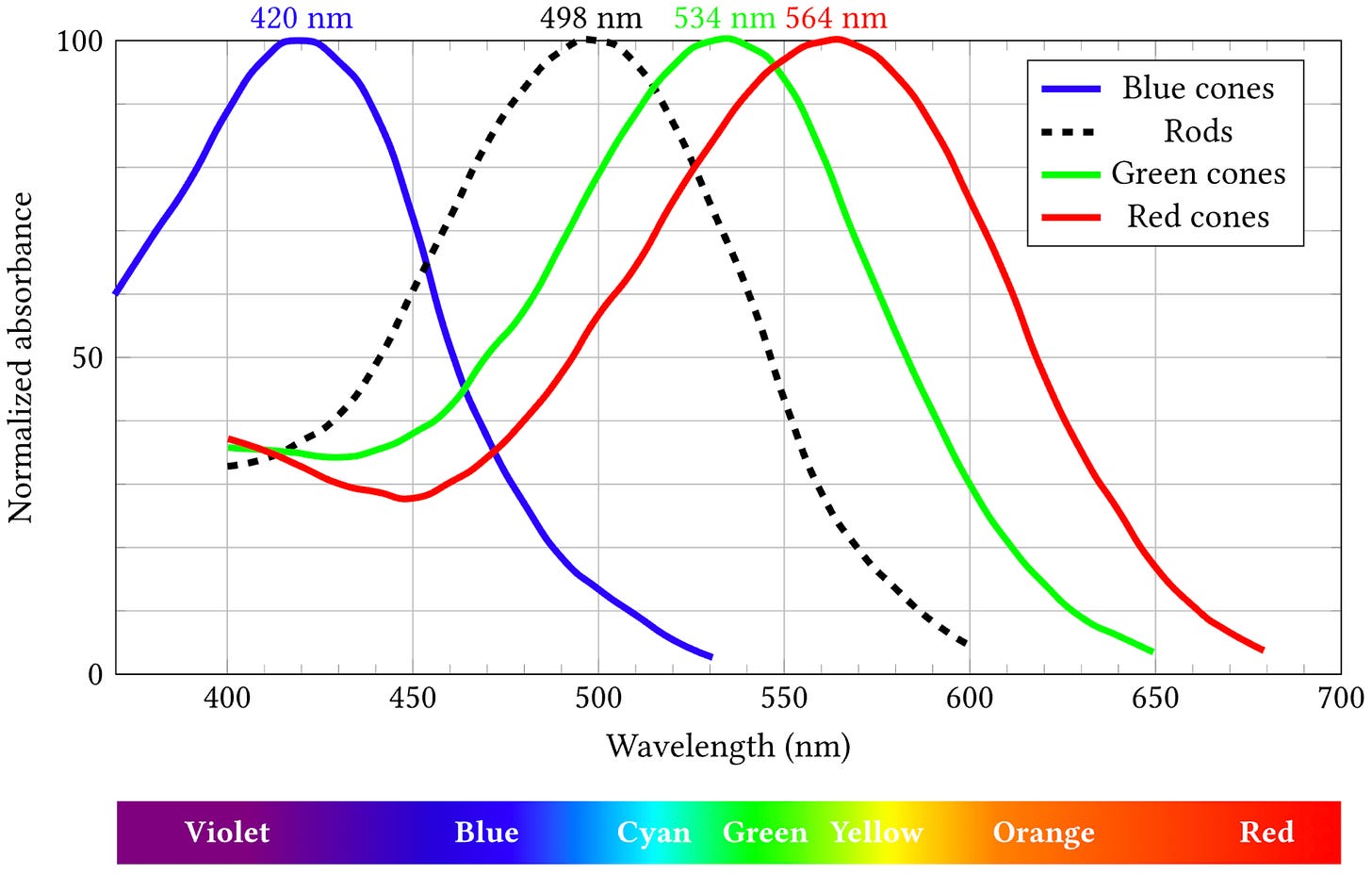

Sanity check: I guess fundamentally your experience of a color (a distribution over wavelengths) is reducible to an ordered triple giving the activation levels of your 3 cone types.

Can you make an interactive demo for getting intuition about questions like the following:

Can we construct two very different physical colors (distributions of wavelengths) that yield the same ordered triple? I’m guessing something like the following would do it:

(a) pure white light, a uniform mix of all wavelengths

(b) a mix of just 3 wavelengths, one at each cone’s peak

That yielded a working app almost as it is now. I just had one followup:

couple questions: what’s S/M/L stand for? and could we default to the blue metamer and have it be an even closer match, just to illustrate the point that very different looking distributions of actual light can yield the same percepts?
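The metamer question in that prompt can also be checked numerically. What follows is a rough sketch, not the app Claude built. It assumes each cone's sensitivity is a Gaussian with made-up peak and width values (real cone curves are asymmetric and these numbers are only illustrative), and every name in it (`CONES`, `sensitivity`, `cone_triple`, `solve3`) is hypothetical. It takes flat "white" light, then solves for the power of three single wavelengths, one at each assumed cone peak, that yields the same ordered triple.

```python
import math

# ASSUMPTION: toy Gaussian cone sensitivities as (peak nm, width nm).
# Illustrative values only, not measured cone fundamentals.
CONES = {"S": (445.0, 25.0), "M": (540.0, 40.0), "L": (565.0, 45.0)}


def sensitivity(cone, nm):
    """Relative response of one cone type to light at a single wavelength."""
    peak, width = CONES[cone]
    return math.exp(-0.5 * ((nm - peak) / width) ** 2)


def cone_triple(spectrum):
    """Map a spectrum {wavelength nm: power} to its (S, M, L) activations."""
    return tuple(
        sum(power * sensitivity(cone, nm) for nm, power in spectrum.items())
        for cone in "SML"
    )


def det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)


def solve3(matrix, rhs):
    """Solve a 3x3 linear system with Cramer's rule."""
    base = det3(matrix)
    return [
        det3([[rhs[r] if c == col else matrix[r][c] for c in range(3)]
              for r in range(3)]) / base
        for col in range(3)
    ]


# (a) "white": equal power at every sampled wavelength from 400 to 700 nm.
white = {nm: 1.0 for nm in range(400, 701, 5)}
target = cone_triple(white)

# (b) three spectral lines, one at each assumed cone peak, with powers
# chosen so the cone activations match those of the white spectrum.
primaries = [CONES[cone][0] for cone in "SML"]
matrix = [[sensitivity(cone, nm) for nm in primaries] for cone in "SML"]
weights = solve3(matrix, target)
three_line = dict(zip(primaries, weights))

print("white      ->", target)
print("three-line ->", cone_triple(three_line))
```

Under these toy curves the three lines need unequal powers, because the M and L curves overlap heavily. So the guess in the prompt works only after the three intensities are tuned.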

I’m a bit of an LLM skeptic for real-world applications, but I have to say Claude building that color app from a single prompt was extremely impressive.

(I can’t remember if it was you or ACX who was pondering the question of why there’s such a mismatch in different people’s attitudes to AI, and I agree that it has a lot to do with coding. If you only use LLMs for help with real-world tasks, and if those tasks are niche enough that you couldn’t just get the same answer by googling, then LLM performance still lags quite a bit behind the hype.)

PS: Let me clarify a key distinction between level 2 and level 3 autonomy.

In the official levels of self-driving, both levels 2 and 3 require you, the human, to be ready to take control in real time. The difference is that at level 2 it's your responsibility to decide when to take control. You're supervising and it's up to you to disengage the AI if it's about to screw up. At level 3 you no longer have to supervise everything it does. You have to be ready to take over at any moment but the AI will get your attention if it needs you. You can read a book or otherwise do your own thing much of the time.

I advocate maintaining that distinction for whatever else we apply these autonomy levels to.

Writing: Level 2 means you're considering every word the AI generates and using that word if and only if it's what you endorse saying, in your own voice. (Better yet, the plagiarism litmus test: don't use anyone's or anything's exact words without explicitly quoting them.) At level 3 you're still the one in charge and should read every word before publishing since you're vouching for the finished product, but you're not supervising the writing word by word as it's written.

Coding: Level 2 means you're in the integrated development environment (IDE) with all the code. At level 3 you ditch the IDE and just talk to the AI in English. You don't worry about the literal code but you're involved in implementation decisions. At least some of them.

In short, level 2 means the AI is assisting you and level 3 means you're directing the AI.