Getting to the Bottom of Full Self-Driving

Also updates on autonomy levels, especially for software development

Elon Musk has a pet theory that our universe is a simulation that always selects for the most ironic or amusing outcome. My pet theory of Musk-related predictions is that all news and updates are exquisitely constructed to be maximally ambiguous.

(The pinnacle of this with Tesla, of course, was the launch of the robotaxi rideshare program in Austin with safety monitors in the passenger seats. It was a perfect scissor statement, to use Scott Alexander’s term from the classic sci-fi story “Sort By Controversial”. The robotaxis were basically half-supervised. The internet exploded in acrimony over this. In my own Manifold market on whether last summer’s launch counted, the debate is still raging.)

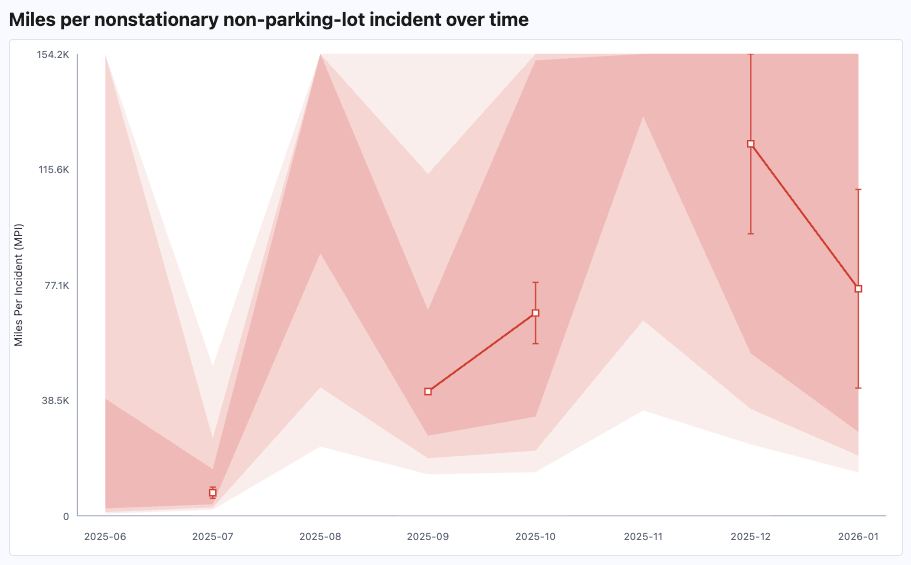

Anyway, the news this week is that the new NHTSA data came out and there was only one (1) additional robotaxi collision (hitting an object in the road or something; Tesla redacts the details, of course, to maximize the ambiguity). So that sounds like an improvement! But at the same time, the mileage for the robotaxis subject to this reporting seems to have gone down (by how much? haha, we have no idea). In short, the error bars are still miles wide, with no way to tell whether safety is improving:

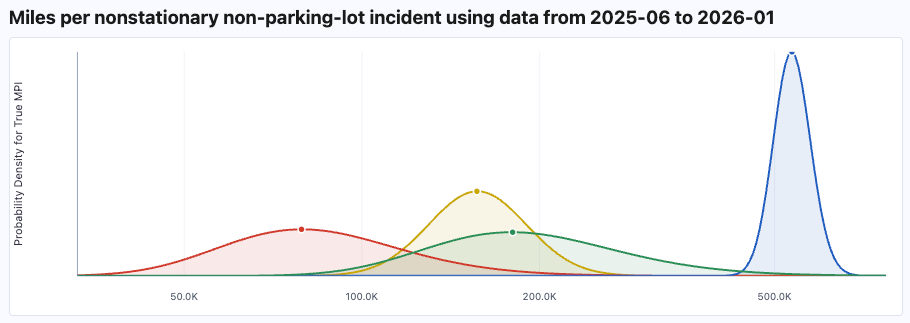
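Why does one more collision barely move the needle? Here is a minimal sketch of the exact (Garwood) Poisson confidence interval for an observed count of k events. It assumes collisions arrive as a Poisson process at a constant rate, which is a simplification, and it ignores the extra uncertainty from Tesla's unreported mileage. Dividing the interval by miles driven would turn it into an interval on the collision rate. The counts in the loop are illustrative, not Tesla's actual numbers.

```python
import math

def poisson_cdf(k: int, mu: float) -> float:
    """P(X <= k) for X ~ Poisson(mu), summed term by term (fine for small k and mu)."""
    term = math.exp(-mu)
    total = term
    for i in range(1, k + 1):
        term *= mu / i
        total += term
    return total

def _bisect_decreasing(f, lo: float, hi: float, iters: int = 200) -> float:
    """Root of a function that is positive at lo and negative at hi."""
    for _ in range(iters):
        mid = (lo + hi) / 2
        if f(mid) > 0:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

def poisson_interval(k: int, conf: float = 0.95) -> tuple[float, float]:
    """Exact two-sided (Garwood) interval for a Poisson mean after observing k events."""
    tail = (1 - conf) / 2
    hi_bracket = 10.0 * k + 10.0  # comfortably above the upper bound for small k
    # Upper bound: the mean under which k or fewer events has probability `tail`.
    upper = _bisect_decreasing(lambda mu: poisson_cdf(k, mu) - tail, 0.0, hi_bracket)
    if k == 0:
        return 0.0, upper
    # Lower bound: the mean under which k or more events has probability `tail`,
    # i.e. P(X <= k - 1) = 1 - tail.
    lower = _bisect_decreasing(lambda mu: poisson_cdf(k - 1, mu) - (1 - tail), 0.0, hi_bracket)
    return lower, upper

# Illustrative counts only: how wide is the interval relative to the count itself?
for k in (1, 2, 5, 10):
    lo, hi = poisson_interval(k)
    print(f"k={k:2d}: 95% interval for the expected count is {lo:.2f} to {hi:.2f}")
```

With a single event the interval spans more than two orders of magnitude, and even ten events leave a factor of nearly four between its ends. That is before accounting for not knowing the mileage.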

We can also aggregate the 9 months of data we have. Here’s Tesla in red, humans in yellow, Zoox in green, and Waymo in blue:

Waymo is like the mathematicians in this xkcd:

The one thing we know is that Waymo has absolutely nailed this, and it’s a travesty how slowly they’re scaling.

At the other extreme from known empirical facts, Elon says the next point release of Full Self-Driving, namely 14.3, is the “last big piece of the puzzle” and will be out “in a few weeks”.

The gaping uncertainty about this actually inspired me to rent a Tesla with the latest self-driving to see if I can get a sense of it for myself. I’m telling you ahead of time as a way to preregister the experiment. Of course I also have a Manifold market:

Weirdly, as of this writing the market puts the probability right at 50% that this Tesla will try to kill me, or at least have a would-be crash, in the first 2000 miles. I plan to supervise it diligently.

I’m a broken record, but… it really is wild how deeply ignorant we seem to be about how close Tesla actually is to vision-only self-driving. This road trip should give me plenty of chances to find out definitively that the answer is “nowhere close”. But if it’s flawless, I fear that will leave me almost as clueless as ever, because 2000 flawless miles in a single experiment is compatible with a very wide range of theories about how reliable the self-driving actually is.
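How wide is “a very wide range”? A standard back-of-the-envelope tool is the statistical “rule of three”: after zero failures in n trials, the 95% upper bound on the failure rate is roughly 3/n. The sketch below assumes would-be crashes occur as a Poisson process at a constant per-mile rate, which real driving (highway versus city, weather, and so on) certainly violates.

```python
import math

def zero_failure_upper_bound(miles: float, conf: float = 0.95) -> float:
    """Upper confidence bound on failures per mile after zero failures in `miles`.

    Assumes a constant per-mile failure rate (Poisson process), so
    P(no failures) = exp(-rate * miles). Setting that equal to 1 - conf gives
    rate = -ln(1 - conf) / miles, about 3 / miles at 95% (the "rule of three").
    """
    return -math.log(1 - conf) / miles

miles = 2000
rate = zero_failure_upper_bound(miles)
print(f"95% upper bound: one failure per {1 / rate:.0f} miles")
# prints: 95% upper bound: one failure per 668 miles
```

So a flawless 2000 miles is good evidence against anything worse than roughly one would-be crash every 670 miles, but the lower end of the interval is zero: the same result is equally consistent with near-perfect self-driving.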

But if this 50% is correct, then I guess an intervention-free trip actually tells us a lot!

This feels like a Necker cube to me. On the one hand, a decent chance of going thousands of miles without a single intervention would mean Tesla FSD is insanely impressive and seemingly on the brink of level 4 autonomy. On the other hand, each additional 9 of reliability (99%, 99.9%, etc.) could be a years-long engineering project. And if 2000 miles is touch-and-go, then we need a few more 9s before it stops needing human supervision.
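To make the nines concrete, here is a small sketch under an assumed interpretation (the post doesn't pin down the unit): “reliability” means the probability of getting through one mile without needing an intervention, with miles treated as independent. It prints, for each number of nines, the mean miles between interventions and the chance of a flawless 2000-mile trip, plus the per-mile reliability at which that chance is exactly 50%. `TRIP_MILES` and `p_flawless` are illustrative names.

```python
TRIP_MILES = 2000  # length of the planned road trip

def p_flawless(per_mile_reliability: float, miles: int = TRIP_MILES) -> float:
    """Chance of zero interventions, treating each mile as an independent trial."""
    return per_mile_reliability ** miles

# Each extra "nine" of per-mile reliability multiplies the mean miles between
# interventions (1 / per-mile failure probability) by ten.
for nines in range(2, 6):
    r = 1 - 10 ** -nines
    print(f"{r:.{nines}f} per mile: ~{1 / (1 - r):,.0f} mi between interventions, "
          f"P(flawless {TRIP_MILES} mi) = {p_flawless(r):.3g}")

# Per-mile reliability that makes a flawless trip a coin flip.
r_half = 0.5 ** (1 / TRIP_MILES)
print(f"50% break-even: ~{1 / (1 - r_half):,.0f} mi between interventions")
```

Under these assumptions, the 50% market corresponds to roughly one intervention every 2,900 miles, somewhere between three and four nines per mile, and each further nine pushes the mean distance between interventions out by another factor of ten.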

Let’s talk about AGI

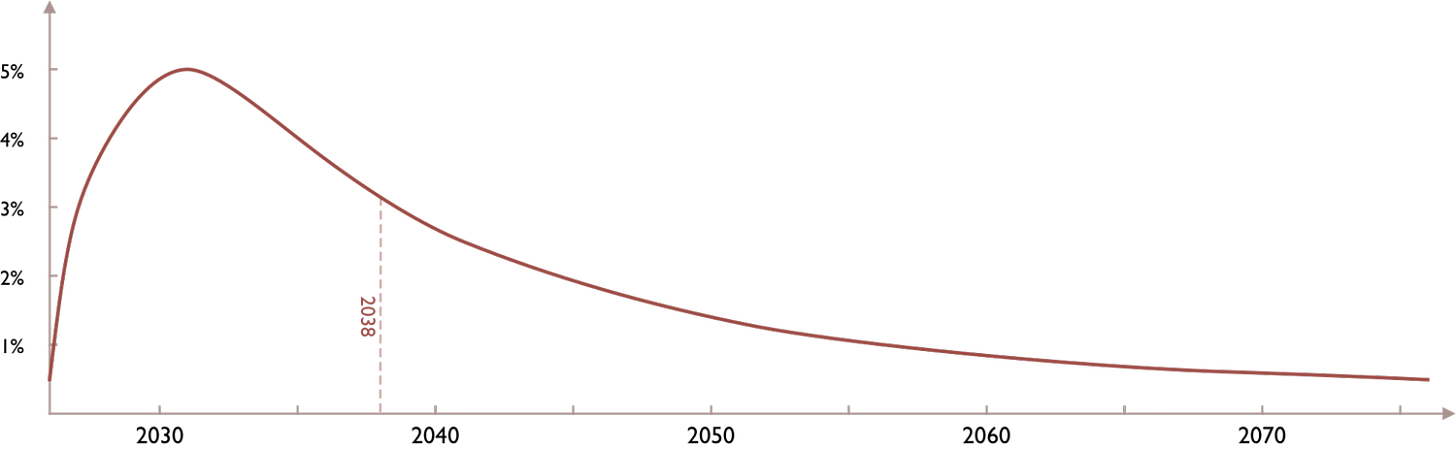

Speaking of deep uncertainty, I really like Toby Ord’s new essay published yesterday, “Broad Timelines”. Nothing too surprising for regular AGI Friday readers. The bottom line is that Ord’s probability distribution for when we hit transformative AI is this:

That’s an 80% credible interval from 3 years to 100 (!) years. It’s wider than most experts’ intervals but, as Ord points out, extremely wide confidence intervals are extremely correct. Most experts’ intervals are at least 50 years wide and the shortest is 25 years. But again, that’s the width of the credible interval. There’s broad agreement that it could absolutely happen this decade.

(Ord also has a nice metaphor about hiking past cloud level to argue that terms like “AGI”, squishy as they are, are very much still meaningful. It’s similar to my old AGI Friday on how it doesn’t matter that intelligence isn’t one-dimensional.)

Bringing this back to self-driving cars for a moment, I really am half expecting (exactly half, I guess) to be blown away by this self-driving Tesla tomorrow. People say it feels like it’s sentient. (That’s certainly how Waymos feel.) But I think this is like how LLMs and diffusion models keep doing more and more wildly impressive things — things we were sure would require AGI five years ago. It makes the remaining distance to AGI feel like it must be short. But dotting/crossing those last i’s and t’s before all white-collar jobs are automatable could be multiple years-long engineering projects. Or not, we just really don’t know.

Levels of autonomy again

I have some follow-ups from last week that I want to move here from the comments. As a quick review, these are the general autonomy levels:

0. No AI
1. Tool AI
2. Assistant AI
3. Directed AI
4. Autonomous AI
5. AGI

There are two key thresholds there. First, level 2 to 3. In the official levels of self-driving, both levels 2 and 3 require you, the human, to be ready to take control in real time. The difference is that at level 2 it’s your responsibility to decide when to take control. You’re supervising and it’s up to you to disengage the AI if it’s about to screw up. At level 3 you no longer have to supervise everything it does. You have to be ready to take over at any moment but the AI will get your attention if it needs you. You can read a book or otherwise do your own thing much of the time.

I advocate maintaining that distinction in whatever else we apply these autonomy levels to.

Writing: Level 2 means you’re considering every word the AI generates and using that word if and only if it’s what you endorse saying, in your own voice. (Better yet, the plagiarism litmus test: don’t use anyone’s or anything’s exact words without explicitly quoting them.) At level 3 you’re still the one in charge and should read every word before publishing since you’re vouching for the finished product, but you’re not supervising the writing word by word as it’s written.

Coding: Level 2 means you’re in the integrated development environment (IDE) with all the code. At level 3 you ditch the IDE and just talk to the AI in English. You don’t worry about the literal code but you’re involved in implementation decisions. At least some of them.

Whatever the domain, level 2 means an AI assistant. Perhaps it assists extensively, but the human’s fully in the loop on every action if not taking the actions directly. At level 3 you can delegate, without constant supervision. Directed AI.

Level 4 is where the AI is meaningfully autonomous. The human isn’t in the driver’s seat, literally or figuratively. The human is still needed when the AI gets stuck, but more at the level of answering specific questions the AI may have. Again, that’s very literally the case if we’re talking about L4 self-driving like Waymo’s.

For software engineering, I’d like to retract my original statement last week that wizards and AI-whisperers like Christopher Moravec have their coding agents at level 4, other than for simple apps. The fact that Christopher can get as close as he does to level 4 and I can’t is what makes it still level 3. If the AI were at level 4 — autonomous coding — it wouldn’t need that kind of human skill to pull it off. Christopher may not be touching actual lines of code anymore but the work he’s doing to make his AI agents sing still counts as software engineering.

Once all software development is at level 4, there’s essentially no such thing as a human software engineer. The AI will still sometimes need human input when building software, but those humans will be product managers or executives or other things, not software engineers.

That’s still before truly transformative AI or AGI, but this is the reason I’m fussing so much about where the exact boundaries are. I think level 4 for all software, including AI R&D, has a decent chance of leading to recursive self-improvement, which has a decent chance of leading to AGI, which has a decent chance of leading to superintelligence, which has a decent chance of leading to literally anything. It all adds up to profound and utter uncertainty about the future and whether humans are even in it.