Explain Like I'm 5: What's a Technological Singularity?

Also, is Claude Mythos superhuman? Answer: not in the singularity-triggering way, no.

The idea of a technological singularity comes by analogy to various runaway feedback loops. A black hole is a classic example: the denser an object, the stronger its gravity, which squishes the object further, aka increases the density, which increases the gravity, which... spirals in on itself, so to speak, until not even light can escape.

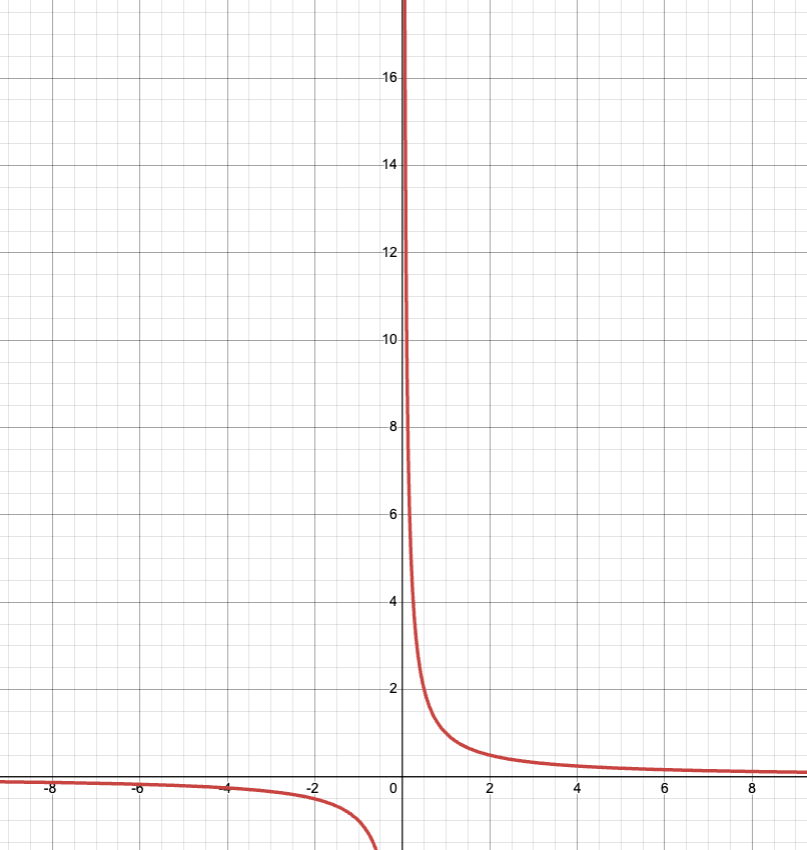

Or take the math definition of a singularity, which includes such examples as a point where a function shoots off to infinity, like what the function 1/x does at x=0.
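
To see that blow-up concretely, here’s a tiny, purely illustrative Python sketch that evaluates 1/x at points creeping toward 0 from the right. Each step ten times closer to 0 makes the output ten times bigger, and at x=0 itself there’s no answer at all.

```python
def f(x: float) -> float:
    """The function 1/x, which has a singularity at x = 0."""
    return 1 / x


# Walk toward x = 0 from the right; each step is 10x closer to zero.
for x in [1.0, 0.1, 0.01, 0.001, 0.0001]:
    print(f"x = {x:<7} -> 1/x = {f(x):g}")

# At x = 0 itself the function is undefined; Python refuses outright.
try:
    f(0.0)
except ZeroDivisionError:
    print("x = 0       -> undefined (the singularity itself)")
```

Approach from the left instead (x = -0.1, -0.01, ...) and the values dive toward negative infinity, which is part of why 1/x has no sensible value at 0.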

With AI, the thought experiment goes as follows. Assume humans create AGI, meaning something that can do anything humans can do, including create AGI. Throw more hardware at that AGI and it will create the next iteration of AI that much faster. That iteration then creates the next, ad infinitum, with each iteration taking less time than the last. Recursive Self-Improvement. Before you know it, you have a superintelligence constrained by nothing but the laws of physics. Dyson spheres, colonizing the galaxy, you name it. Which is great if human values manage to survive that game of telephone.
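
Why call it a singularity rather than just “fast progress”? One way to see it, under the made-up assumption that the first iteration takes 1 year and each later one takes half as long as the one before (both numbers hypothetical): infinitely many iterations then fit inside a finite 2 years, because 1 + 1/2 + 1/4 + ... adds up to 2. A minimal Python sketch:

```python
def elapsed_after(n: int, first: float = 1.0, r: float = 0.5) -> float:
    """Total time for n iterations if iteration k takes first * r**k.

    `first` (1 year) and `r` (each iteration half as long as the last)
    are hypothetical numbers chosen purely for illustration.
    """
    return sum(first * r**k for k in range(n))


def limit(first: float = 1.0, r: float = 0.5) -> float:
    """Total time for infinitely many iterations; only finite when 0 <= r < 1."""
    if not 0 <= r < 1:
        raise ValueError("with r >= 1 the total time grows without bound")
    return first / (1 - r)


for n in [1, 2, 5, 10, 50]:
    print(f"{n:>2} iterations: {elapsed_after(n):.6f} years")
print(f"infinitely many iterations: {limit():.6f} years")
```

If each iteration took as long as the last (r = 1), the total would just keep growing and you’d get steady progress, not a singularity. The whole scenario hinges on the speedup compounding.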

Armies of Cheap Grad Students

Back in present reality, the huge news this week is Claude Mythos, the latest frontier model from Anthropic. Except you can’t try it yet, nor will you be able to any time soon, because it’s So Dangerously Powerful. The stochastic-parrot people are rolling their eyes so hard right now. But I’m here to tell you that Claude Mythos is legit. I like how someone responded to the pooh-poohers on the TwitToks:

actually that’s not impressive the concept of a Dyson sphere was already in the training data

Computer security firms that Anthropic has given access to offer plenty of corroboration that Claude Mythos is superhuman at hacking. So much so that, if this model were released to the public, it could plausibly bring the whole internet to its knees. Seems concerning.

Even so, I want to resist the hype and various misleading characterizations. Every time you hear about an AI model blackmailing someone or escaping its sandbox or other such terrifying-sounding chicanery and shenanigannery, it’s safe to assume (so far) that it was a contrived situation in a lab experiment. Like the Kobayashi Maru test in Star Trek. Or the punchline of Ender’s Game. Sorry, this is supposed to be a normal-person/5-year-old-friendly post. The point is, if you present a scenario like “the only way to defuse this nuclear bomb is to murder this puppy, go” then don’t be surprised if you can get the AI to make questionable choices. Now I’m exaggerating in the other direction, but that’s my standard point: have some healthy skepticism in both directions.

So is Claude Mythos superhuman or what? Yes and no. My impression, after much discussion with computer security friends and other experts, is that Claude Mythos is like having an army of cheap grad students. It’s not as smart as true experts, and it hasn’t done anything humans couldn’t also do, but it can do it at scale. As a more universal analogy, imagine you’re tasked with finding typos in a textbook. You could spend days hunting and maybe find 25% of them. But if you were handed a specific page of the book and told “there’s a typo here, find it,” then, assuming it existed, you probably would. If you could obliviate yourself before every page and genuinely believe each time that the page contained a typo, you’d eventually find them all. That’s the superpower a Large Language Model has. Replace finding typos with finding vulnerabilities in software (even security-hardened software that’s been deployed globally for decades) and that’s Claude Mythos.

It’s a very big deal but, as far as we know, not in itself a quantum leap towards recursive self-improvement. Maybe just a step in that direction? As for the model after Claude Mythos, or whatever Google and OpenAI come out with next to one-up it, who knows.

Random Roundup

Tesla’s Full Self-Driving J/K Keep Your Eyes On The Road At All Times (FSDBGTQ+) version 14.3 is rolling out now, to influencers at least. Elon Musk was only off by a factor of like 2 this time. I’ll allow it. But it’s sounding a little less like the promised “final piece of the puzzle”. Elon Musk on Twitter: upcoming point releases will bring polish, and version 15 will “far exceed human levels of safety”. Cynically, one could read between the lines that the current version is not yet at human-level safety, at least not without supervision. Also, Musk says this every dang time. Next version for realsy-reals. Again, it feels like it’s finally there, and it’s true I’m now completely ruined for old-fashioned driving, but this is not something an individual person can tell just by trying it. It depends on how it handles a proverbial black swan darting into the road. For that we need data, which Tesla isn’t giving us. The closest we’ve got is the NHTSA data for the robotaxis, which covers too few miles to be sure of much but, if anything, points to not-quite-human-level safety. Then there’s Tesla’s own data for supervised FSD, which looks very promising but depends on the disengagement data, which Tesla elides. Also, Tesla has a history of lying. (But assuming we trust their data, it’s not as bad as it could be: any collision that happens within 5 seconds of FSD being disengaged counts as a collision for FSD. The problem is that human-prevented collisions aren’t counted, so we can’t estimate how safe unsupervised FSD would be. I wish we could get data specifically from Tesla owners who trust Elon Musk implicitly and hack their FSD to stop it from forcing them to watch the road.)
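
The attribution rule in that parenthetical is simple enough to write down as a tiny function. This is a hypothetical sketch of the rule as described, not Tesla’s actual code or methodology: the function name, measuring time in seconds, and treating exactly 5.0 seconds as “within” the window are all assumptions.

```python
from typing import Optional

# Assumptions: timestamps in seconds; "within 5 seconds" treated as inclusive.
ATTRIBUTION_WINDOW_S = 5.0


def counts_as_fsd_collision(collision_t: float,
                            fsd_engaged: bool,
                            disengaged_t: Optional[float]) -> bool:
    """Hypothetical sketch: should this collision be charged to FSD?

    collision_t  -- when the collision happened
    fsd_engaged  -- whether FSD was engaged at the moment of impact
    disengaged_t -- when FSD was last disengaged, or None if it never was
    """
    if fsd_engaged:
        return True
    if disengaged_t is None:
        return False  # FSD never engaged: a purely human-driven collision
    return 0 <= collision_t - disengaged_t <= ATTRIBUTION_WINDOW_S


print(counts_as_fsd_collision(100.0, False, 97.0))  # True: takeover 3 s before impact
print(counts_as_fsd_collision(100.0, False, 90.0))  # False: takeover 10 s before impact
```

The blind spot shows up here too: a driver who takes over and avoids the crash generates no collision at all, so that near-miss never reaches this function, and the resulting numbers can’t tell us how unsupervised FSD would have fared.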

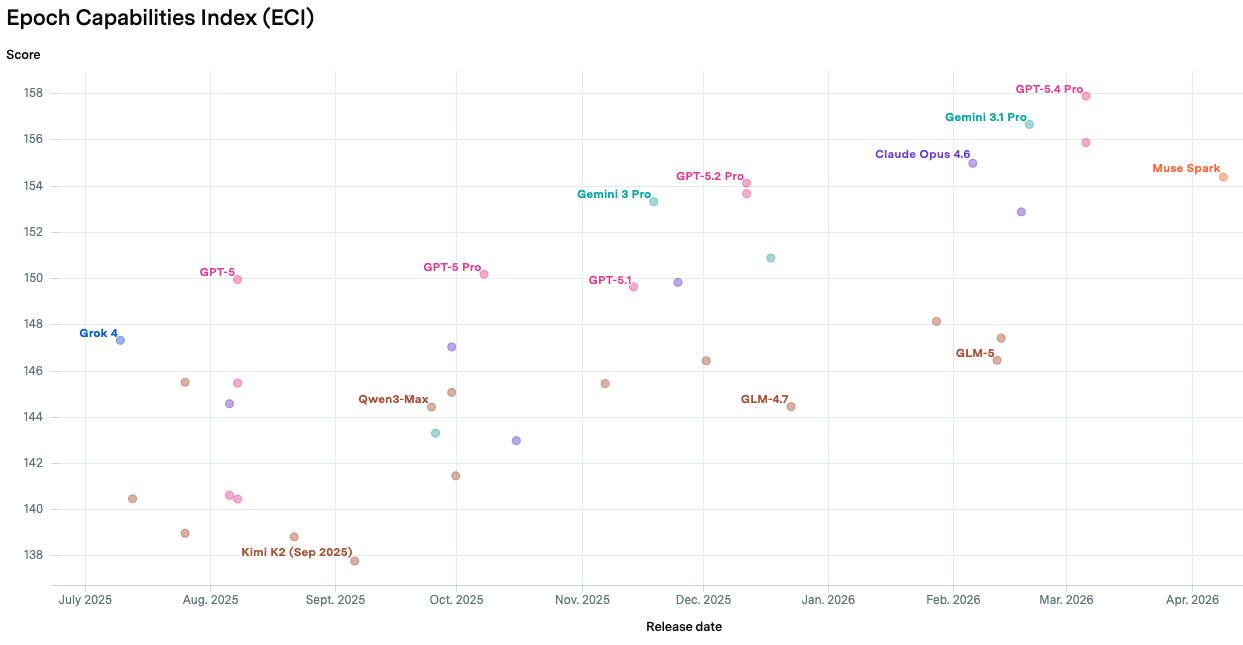

Meta’s (née Facebook’s) AI has been so bad it hasn’t merited a mention, but as of two days ago it finally at least appears on the graph.

It’s the orange one at the right, Muse Spark. Now it’s xAI (Elon Musk’s AI company) that’s falling into irrelevance, with Grok 4 in blue at the far left. But really the only AI models that matter are the big 3: Google DeepMind’s Gemini, Anthropic’s Claude, and OpenAI’s ChatGPT.

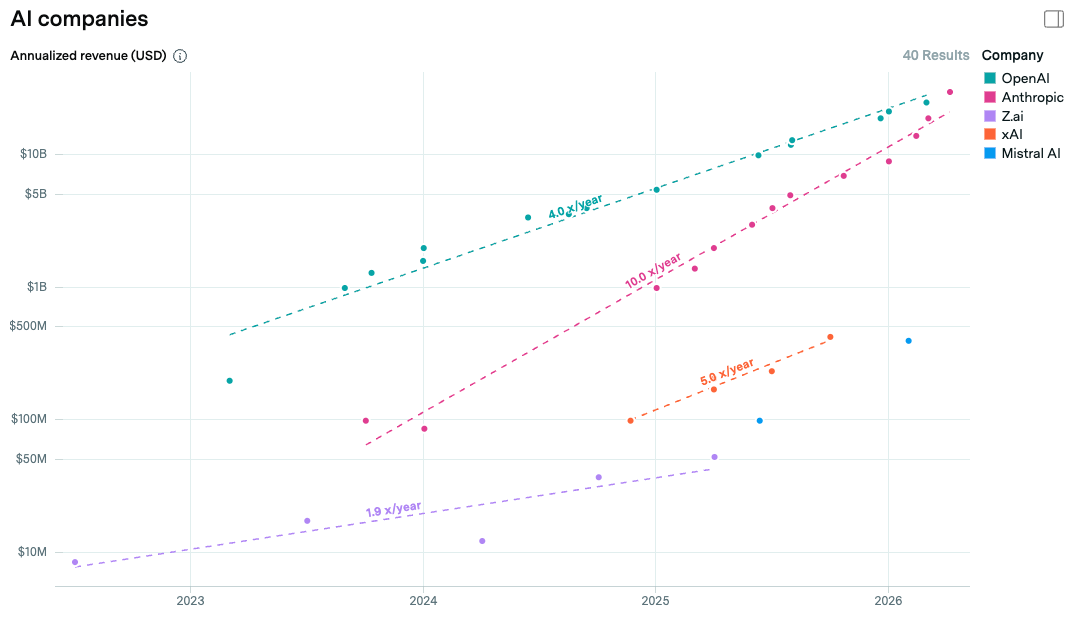

Speaking of Anthropic vs OpenAI, no news seems to be good news in Anthropic’s war with the Department of War. Anthropic seems likely to win enough of its court cases for everything to go back to relative normal. Given the Claude Mythos situation, the government will, if they’re sane (haha), want to pretend none of that unpleasantness ever happened. Relatedly, Anthropic is, just about now, surpassing OpenAI on annualized revenue. Also, it’s been at 10x/year revenue growth for more than two straight years. Presumably that’s unsustainable, and it could still be a bubble poised to burst, but that’s looking somewhat less likely at this exact moment. If the trend actually holds through next year, Anthropic would be the biggest company in the world by revenue, approaching $1T/year. (Thanks to the amazing Epoch AI for the data and these graphs.[1])
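
The “approaching $1T/year” extrapolation is just compound growth, so it’s easy to sanity-check yourself. A minimal Python sketch, assuming growth stays at exactly 10x per year. The $10B starting run rate below is a made-up round number for illustration, not Anthropic’s actual figure, so plug in whatever number you believe.

```python
import math


def project(current: float, years: float, growth_per_year: float = 10.0) -> float:
    """Revenue after `years` of steady exponential growth."""
    return current * growth_per_year ** years


def years_to_reach(current: float, target: float, growth_per_year: float = 10.0) -> float:
    """Years of steady growth needed to go from `current` to `target`."""
    return math.log(target / current) / math.log(growth_per_year)


start = 10e9  # hypothetical annualized revenue in dollars, NOT a real figure
print(f"after 1 year:  ${project(start, 1) / 1e9:,.0f}B")
print(f"years to $1T: {years_to_reach(start, 1e12):.2f}")
```

At 10x/year, each extra factor of 10 costs exactly one more year, so getting the starting figure wrong by 2x only shifts the date by about log10(2) ≈ 0.3 years, whereas a slowdown in the growth rate itself can push it out much further.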

The New Yorker digs deep into what a shyster OpenAI CEO Sam Altman is. Not that he’s as bad as Elon Musk, or even Mark Zuckerberg, probably. But he’s pretty bad compared to Anthropic’s Dario Amodei or especially DeepMind’s Demis Hassabis. I’m kind of rooting for Anthropic to crush OpenAI, honestly. If OpenAI and xAI and Meta all became irrelevant to frontier AI, maybe Google DeepMind and Anthropic could coordinate to slow down and make AGI less likely to lead to catastrophe. I’m proud of the people (at least one friend in particular) who’ve turned down more money from OpenAI to work at Anthropic instead. I have mixed feelings about whether that’s second best to refusing to work for any frontier AI company. Refusing to support OpenAI is a good start at least.

As a random demonstration of how powerful the golems are these days, I just showed them that graph and asked them to extrapolate to a trillion dollars for each company. I asked two different golems as a sanity check and did a quick calculation in Mathematica just in case, but we’re approaching the point, as I first predicted about a year ago, where you can just cite an LLM with a straight face. I said “give it a couple years,” so one more year to go. We’ll see by then how gullible I was.

“Given the Claude Mythos situation, the government will, if they’re sane (haha), want to pretend none of that unpleasantness ever happened.”

That would be great. But aside from the obvious lack of sanity: do we think that OpenAI has fallen permanently behind? Probably not. I assume they’ll have their own Mythos-level AI in a couple of months.