Third World Bullshit

Anthropic fights the literal Department of War, guess who wins?

It’s politics week for AGI Friday. Breaking news is that Anthropic, the AI company that makes Claude.ai (my favorite model), got in a fight with the literal Department of War and the latter went nuclear. Figuratively.

Scott Alexander (from whom I also stole the title of this post) laid out the situation as it stood a couple days ago, and I’ll quote him for background:

Anthropic signed a contract with the Pentagon last summer. It originally said the Pentagon had to follow Anthropic’s Usage Policy like everyone else. In January, the Pentagon attempted to renegotiate, asking to ditch the Usage Policy and instead have Anthropic’s AIs available for “all lawful purposes”. Anthropic demurred, asking for a guarantee that their AIs would not be used for mass surveillance of American citizens or no-human-in-the-loop killbots. The Pentagon refused the guarantees, demanding that Anthropic accept the renegotiation unconditionally and threatening “consequences” if they refused.

The threatened consequences were (a) being forced to comply by invoking the Defense Production Act and (b) designating Anthropic a “supply chain risk”, banning all US companies with military contracts from using Anthropic.

Anthropic held their ground. Can you guess how the Trump administration took that?

First, as many are pointing out, those threats are completely contradictory. The Defense Production Act is for technology so critical to national security that the law forces companies to provide it. The “supply chain risk” designation is for technology from foreign adversaries that’s so threatening to national security that the law forbids many companies from using it. The “supply chain risk” designation is what I’m calling the nuclear option. It hasn’t even been used against Anthropic’s Chinese competitors. It’s certainly never been used against an American company.

record scratch

Until today. That’s right, it’s been invoked against Anthropic as pure retaliation for not caving on their red lines of no killbots and no mass domestic surveillance. Also Trump called Anthropic a “radical left, woke company” full of “Leftwing nut jobs”. Judging from their statement, they seem almost the opposite to me.

Despite my previous AGI Friday, “Killer Robots Are Perrrrfectly Safe”, I’m 100% on Anthropic’s side. As are hundreds of Google and OpenAI employees. Even Sam Altman, who I generally find much less principled than anyone at Anthropic, is averring that OpenAI’s red lines are the same.

Even if you disagree about killbots and government surveillance, or even if you buy Pete Hegseth’s framing that (let me see if I can say it with a straight face) this is Anthropic trying to “seize veto power over the operational decisions of the United States military”, there’s a bigger principle at play. Scott Alexander again:

The “supply chain risk” designation was intended as a defense against foreign spies, and it’s pathetic Third World bullshit to reconceive it as an instrument that lets the US government destroy any domestic company it wants, with no legal review, because they don’t like how contract negotiations are going.

I’m hoping this doesn’t hold up in court.

PS: Anthropic, in their very latest statement, questions how broad the “supply chain risk” designation actually is. Fingers crossed that that’s correct and that ultimately taking this stand helps Anthropic more than it hurts them. I certainly recommend Claude.ai and Claude Code and Claude Cowork more than ever.

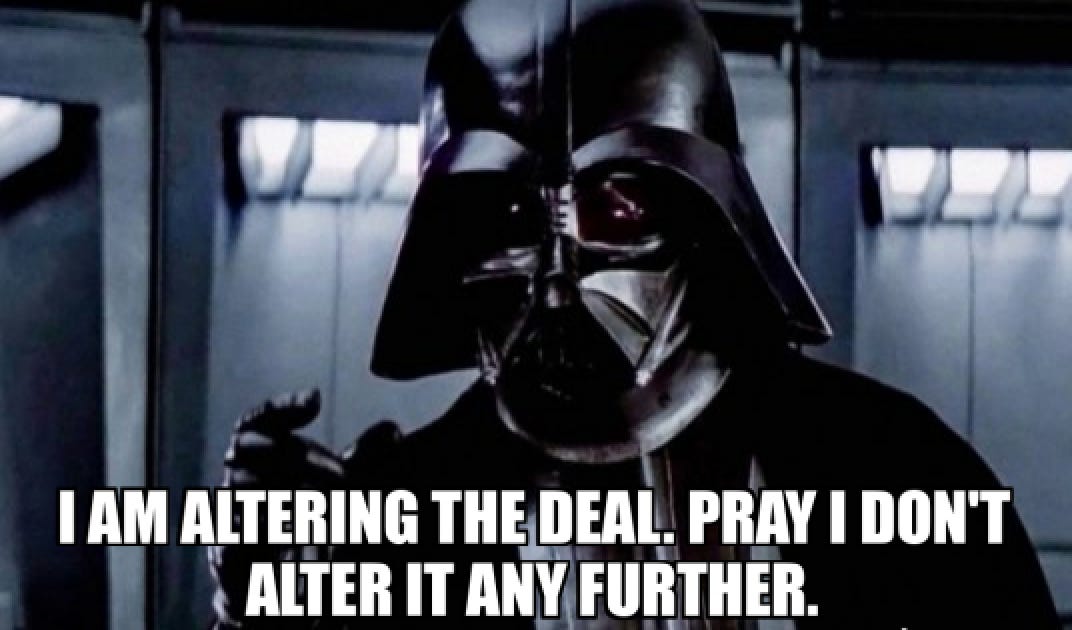

PPS: Apparently the Pentagon has agreed in principle to a contract with OpenAI using the same red lines — no killbots, no mass domestic surveillance. Someone needs to send this Darth Vader meme to Sam Altman.

UPDATE the next day: It seems I gave Altman too much credit. See latest discussion in the comments. Huge thanks to William Ehlhardt for helping me unpack Altman’s elaborate equivocating.

Random Roundup

If you all can stand another political post any time soon, I have a lot to say about an article claiming that the political left is missing out on AI. Suffice it to say that it’s pretty ironic that Trump and Hegseth are saying Anthropic and CEO Dario Amodei are all radical leftists.

Waymo hit 200 million autonomous miles this week and added Dallas, Houston, San Antonio, and Orlando to their list of cities, now 10 in total. I’ve continued to improve and polish the tool I made to assess how safe Waymos vs Tesla robotaxis vs Zooxes vs humans are. Tesla is up to around 840k miles if we believe crowdsourced estimates, with little growth in recent months and not enough data to say much about how safe the full self-driving is when unsupervised. It seems to be somewhat worse than humans so far. In California, Tesla has humans in the driver's seat and hasn't applied for the permits to remove them. I believe that's because California requires more transparency than Tesla's willing to be subjected to at this early stage. Tesla’s vision-only self-driving, impressive as it is when tested over thousands of miles by individual people, is not yet safe enough, unsupervised, to scale up.

The “Will Smith eating spaghetti” benchmark shows off the progress in AI video generation over the past three years.

A mathematician who’s been skeptical of AI hype now says AI is on track to have a bigger impact on mathematics research than the computer. See also IEEE Spectrum on math benchmarks.

Update on that Sam Altman note in your post

"OpenAI CEO Sam Altman said on Friday it has reached an agreement with the U.S. Department of War to deploy its AI models on classified cloud networks."

https://www.msn.com/en-us/news/politics/openai-reaches-deal-to-deploy-ai-models-on-us-department-of-war-classified-network/ar-AA1XeEiw

Contrary to OpenAI's spin, their new contract with the DoW does not have the "same red lines" as Anthropic wanted. If you read the relevant contract sections in OpenAI's own post ( https://openai.com/index/our-agreement-with-the-department-of-war/#:~:text=The%20Department%20of%20War%20may,Act%20and%20other%20applicable%20law. ) you will find it is simply "all lawful use" but with more words.