Are Tesla robotaxis safer than humans yet?

No, but if you want to believe...

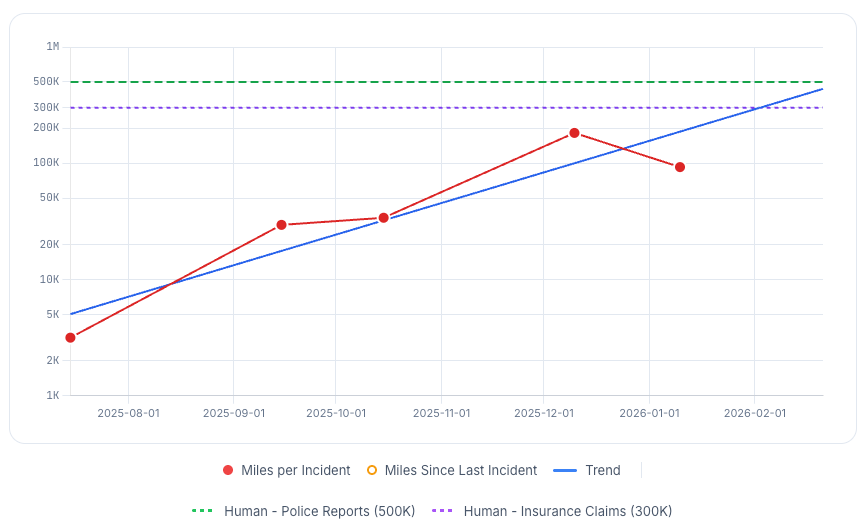

I swear I’ll let this go soon but we got new crash data from the National Highway Traffic Safety Administration (NHTSA) and it is just ridiculous how the Tesla haters jump all over it to say it’s worse than we thought and the Tesla fans show us graphs like the following, seemingly showing that Tesla robotaxis and their soi-disant Full Self-Driving are mere months from human-level safety.

Neither side is being epistemically virtuous, so here I am again to set the record straight. The biggest problem with the above graph is that it seems to be missing the latest NHTSA data for January. Here’s what the latest NHTSA data tells us:

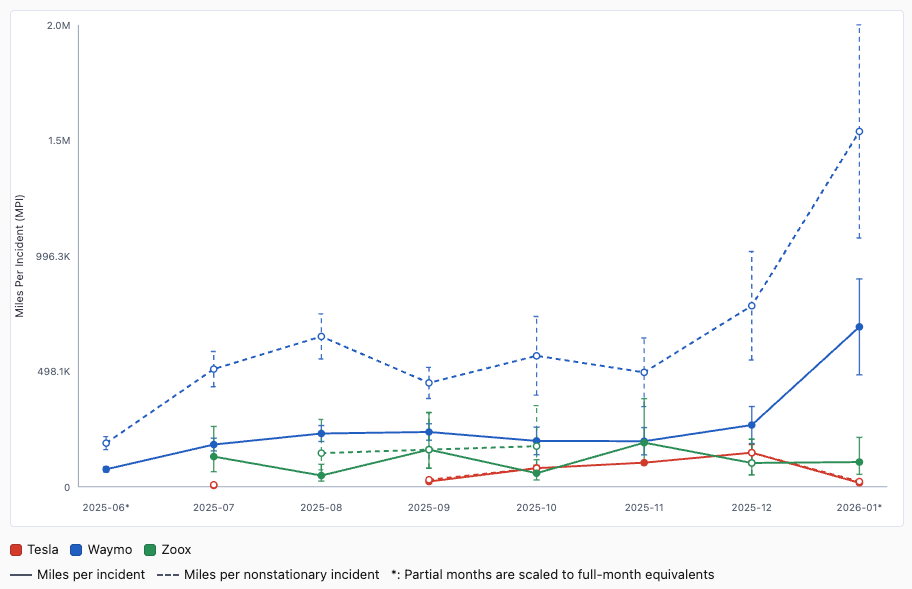

That’s Waymo in blue, starting out superhumanly safe (see last week’s discussion for why it’s wrong to treat humans as crashing only every 300k or 500k miles in this context) and recently shooting beyond a million miles per incident. For both Tesla and Zoox, the answer is mostly that we don’t have enough data to be sure of anything. Taking the data at face value, the number of miles a Tesla robotaxi can go between crashes just fell off a cliff. That’s figurative, of course: almost all of these incidents are, at worst, minor fender benders. So if you want to believe that Tesla is about to figure out vision-only self-driving for real, this shouldn’t really dissuade you.
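To make “not enough data” concrete, here is a minimal sketch that treats incidents as a Poisson process (an assumption for illustration; nothing above commits to a particular model) and computes the exact (Garwood) 95% interval on miles per incident. The function names are hypothetical, and no NHTSA numbers are used.

```python
import math

def poisson_cdf(k: int, lam: float) -> float:
    """P(X <= k) for X ~ Poisson(lam), with terms computed in log space to avoid underflow."""
    if lam == 0:
        return 1.0
    log_lam = math.log(lam)
    return math.fsum(
        math.exp(-lam + i * log_lam - math.lgamma(i + 1)) for i in range(k + 1)
    )

def _solve_decreasing(f, target: float, hi: float, iters: int = 200) -> float:
    """Bisection for f(lam) == target, where f is decreasing on [0, hi]."""
    lo = 0.0
    for _ in range(iters):
        mid = (lo + hi) / 2
        if f(mid) > target:
            lo = mid  # still above target, so the root lies to the right
        else:
            hi = mid
    return (lo + hi) / 2

def poisson_interval(k: int, conf: float = 0.95) -> tuple[float, float]:
    """Exact two-sided interval for the expected incident count, given k observed incidents."""
    alpha = 1 - conf
    hi = 10.0 * (k + 10)  # comfortably above any plausible upper bound
    lower = 0.0 if k == 0 else _solve_decreasing(
        lambda lam: poisson_cdf(k - 1, lam), 1 - alpha / 2, hi)
    upper = _solve_decreasing(lambda lam: poisson_cdf(k, lam), alpha / 2, hi)
    return lower, upper

def miles_per_incident_interval(miles: float, incidents: int, conf: float = 0.95):
    """Invert the count interval into a (low, high) range of miles per incident."""
    lower, upper = poisson_interval(incidents, conf)
    low = miles / upper
    high = math.inf if lower == 0 else miles / lower
    return low, high
```

With zero incidents the upper end is infinite, and with a handful of incidents the two ends differ several-fold; with 100 incidents the count interval is already within about ±20%, which is why a fleet with lots of data can be estimated far more tightly.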

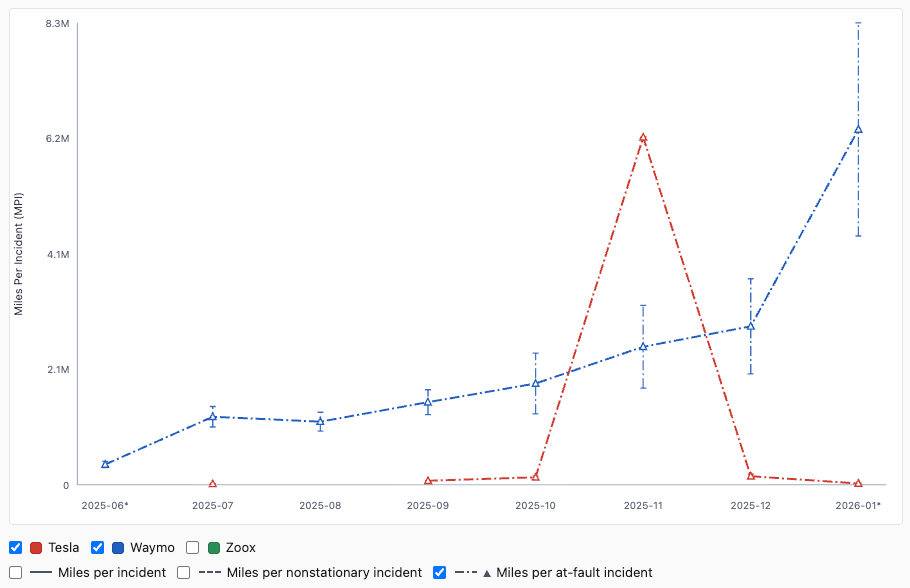

If we try to estimate just incidents where the robotaxi is at fault, and if we trust AI-generated estimates1 of such, we get something like this:

That makes it look like Tesla leapt above Waymo in November. In reality, Tesla just happened to log only one incident that month, and it seems not to have been Tesla’s fault. Zoox also has so little data that its numbers are all over the place, so I omitted it. Of course, Waymo has tons of data (and is transparent about all its incidents), so that estimate of 4-8 million miles between incidents that Waymo is at fault for is probably about right.
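One plausible way to turn per-incident fault judgments into an at-fault estimate is to average each incident’s fault probability across judges and sum over incidents. That method is an assumption for illustration, not necessarily how the chart above was made. This sketch (hypothetical function names, toy numbers) shows why a month with a single probably-not-at-fault incident yields a spuriously huge miles-per-at-fault-incident figure.

```python
import math
from statistics import mean

def expected_at_fault(judgments: list[list[float]]) -> float:
    """Sum over incidents of the mean fault probability across judges.

    judgments[i] holds one probability in [0, 1] per judge for incident i.
    """
    return math.fsum(mean(per_judge) for per_judge in judgments)

def miles_per_at_fault(miles: float, judgments: list[list[float]]) -> float:
    """Miles divided by expected at-fault incidents (infinite if none are expected)."""
    expected = expected_at_fault(judgments)
    return math.inf if expected == 0 else miles / expected

# Toy month: one incident that three judges think is probably not the robotaxi's fault.
one_incident_month = [[0.1, 0.0, 0.05]]
print(miles_per_at_fault(100_000, one_incident_month))  # about 2 million: 20x the raw miles
```

A single low-probability incident divides the month’s miles by a tiny number, whereas a fleet logging many incidents per month gets a sum that a few uncertain judgments barely move.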

If you want to browse these “incidents” and play with the data more, the golems² and I made a little website.

Data aside, Tesla robotaxis continue to look ever more impressive in demos, so I’m still not totally disbelieving that they could be close to cracking this. But unsupervised vision-only (as opposed to with cameras + lidar + radar) self-driving remains very much unproven. See Musk vs McGurk for more on how wrongheaded Musk’s anti-lidar stance is.

I should also re-emphasize the insane number of assumptions we have to make here. I’ve scrutinized the relevant page of Tesla’s Q4 report, and it leaves completely ambiguous whether the mileages include supervised rides like the ones in California or the subset that are supervised in Austin. I’m giving Tesla every possible benefit of the doubt, undeserved as that is, to get an upper bound on what Tesla bulls can reasonably choose to believe. Here are the things we can glean from the official Tesla Q4 report:

- Testing of driverless robotaxis began in December 2025, suggesting that earlier rides didn’t count as driverless. But that’s puzzling, because Tesla has been reporting incidents to NHTSA since June 2025 for which they officially report that the car had no driver/operator, remote or otherwise.
- Tesla calls the California program a ride-hailing service, avoiding calling those cars robotaxis explicitly.
- They show a cumulative plot of robotaxi miles, presumably because a more standard miles-per-month plot would look embarrassingly flat for recent months.
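A cumulative curve keeps climbing as long as any miles are driven, so it can hide a stall. Differencing recovers the per-month series. This minimal sketch assumes month-end cumulative totals with the series starting from zero; the toy numbers are made up and are not Tesla’s.

```python
def monthly_from_cumulative(cumulative: list[float]) -> list[float]:
    """Per-month miles from month-end cumulative totals (series assumed to start at 0)."""
    previous = [0.0] + cumulative[:-1]
    return [now - before for before, now in zip(previous, cumulative)]

# Toy series: a cumulative curve that still rises every month...
toy_cumulative = [100_000, 250_000, 400_000, 450_000, 480_000]
# ...hides that monthly mileage dropped from 150,000 to 30,000.
print(monthly_from_cumulative(toy_cumulative))
```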

I have more details and links to all the raw data at dreeves.github.io/crashla.

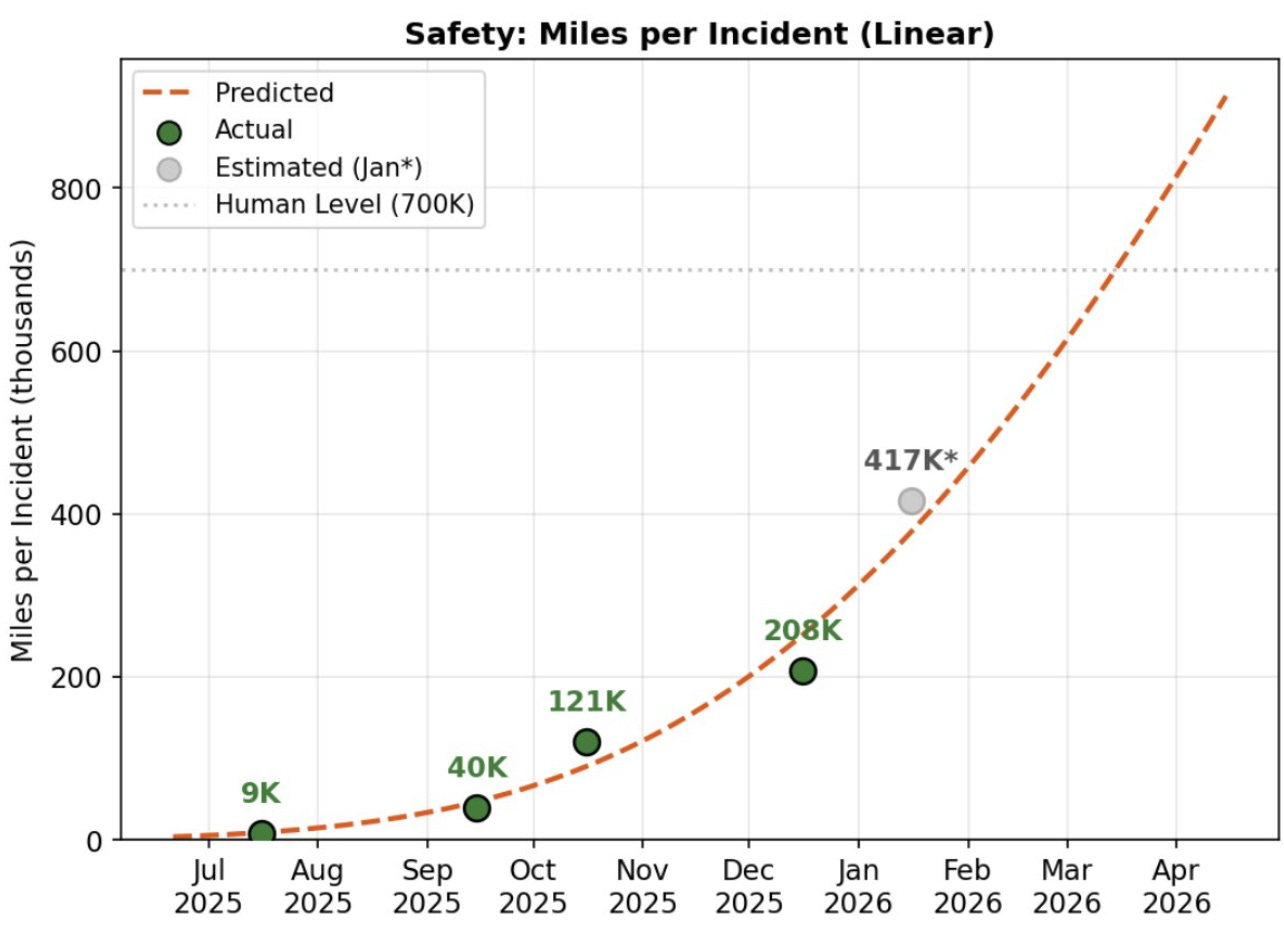

UPDATE 2026-02-21: Here’s another example of a graph that prominent people in the Tesla community are showing off:

Sawyer Merritt tweeted that chart, saying “@raines1220, who has a PhD in Computer Science, predicts Tesla’s Robotaxi fleet in Austin will fully surpass human drivers by the end of Q1 2026.” If it’s enough to believe someone who has a PhD in Computer Science, then I’m qualified to tell you that the chart above is full of errors and the dotted line is wishful thinking. But, again, the real story is that we can’t infer much from any of this data. The error bars are miles high. Still, my prediction is that the end of Q1 will not see a big improvement in Tesla’s miles per incident, and certainly nothing close to Waymo’s.

¹ The AI-generated estimates match each other pretty well (I had all three of Claude Opus 4.6, GPT-5.3-Codex, and Gemini 3.1 Pro give their judgments), and they also match my own human judgment fairly well for the 9 Tesla incidents I talked about last week.

² My friend and colleague Clive Freeman says he’s trying to persuade people that “golem” is the right word for LLMs. “Human made machines that follow the instructions we write on a tablet in their head, plus go-LLM/golem.” So good.