The AGI Debate Only Seems Polarized

Even Gary Marcus admits 4 years to AGI is not impossible

(Prescript: Jump to the Random Roundup at the end if your fingers are tired from hanging on the cliff I left you on last week.)

Everyone is talking about the new book, If Anyone Builds It, Everyone Dies, which comes out later this week. Three of my favorite bloggers have reviewed it already. Scott Aaronson’s review, as I mentioned previously, says that “even if one still isn’t ready to swallow the full package of Yudkowskyan beliefs, any empirically minded person ought to be updating in its direction.” Scott Alexander’s review has plenty of quibbles, but he recommends it to ordinary people as a worthy introduction to the subject. (His review also manages to be persuasive and poignant and funny in its own right.) Zvi Mowshowitz’s review dissects many of Yudkowsky and Soares’s arguments in detail, in particular their analogies with human evolution. (And, oops, just as I’m going to press, Zvi seems to have removed his review. I’m guessing he meant to time it with the official launch of the book. UPDATE: Confirmed, link added.)

None of them fully agrees with the book’s authors that if anyone builds superintelligence, everyone on earth necessarily dies, but all of them take the fear seriously and assign it enough probability to treat it as humanity’s most pressing issue right now, despite plenty of competition!

In the other corner, we have the skeptics. Gary Marcus opined in the New York Times a week or so ago that “the fever dream of imminent superintelligence is finally breaking”. He ridicules GPT-5 in particular (see the roundup below for more on this) and the AI industry generally. (UPDATE: As discussed in the AGI Friday a week after this one, Gary Marcus’s opinions turn out to have massive overlap with those of the authors of If Anyone Builds It, Everyone Dies!)

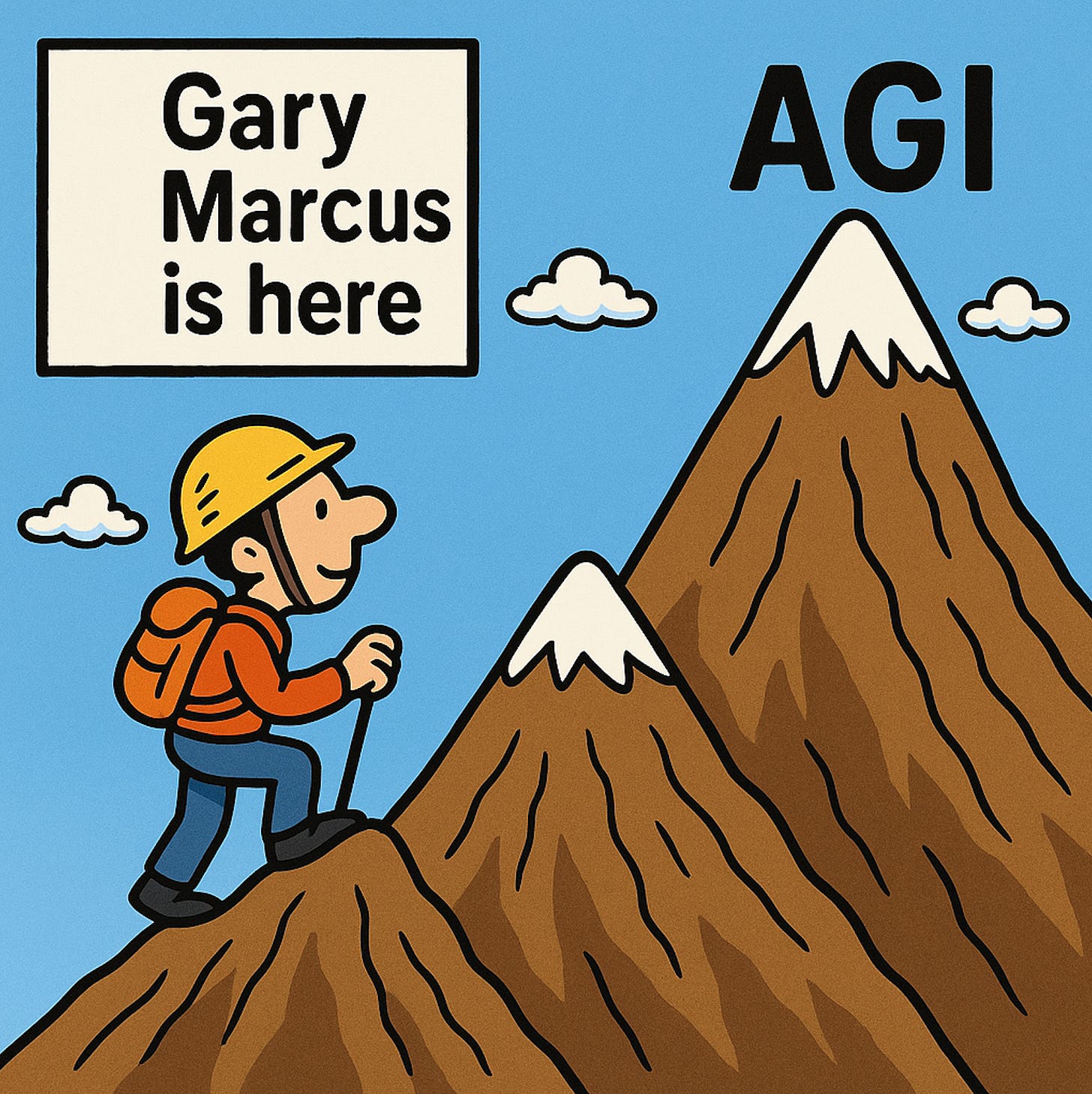

But contrary to the impression you get from that piece, it turns out Gary Marcus thinks AGI is coming in 10-25 years. Ish, he hastens to add. He’s keen to point out that it could take longer (true fact). But, when pressed, he admits that less than 10 years is also possible. He calls 4 years “awfully unlikely”.

Marcus is shockingly good at goalpost moving. As I talked about in one of the first AGI Fridays, he made his whole brand “deep learning is hitting a wall” back before LLMs and the whole generative AI era. He points this out proudly, with sing-songy told-you-sos.

My old Messy Matters coblogger, Sharad Goel, now teaching about AI at Harvard, has a point estimate of 15 years to AGI, close to the middle of Marcus’s range. So, roughly speaking, Goel is suggesting an equal chance of AGI earlier or later than that. As always, the confidence intervals are wide. It’s like my earthquake analogy. AGI will be here “any decade now”. Possibly this decade.

What I’ll begrudgingly give Gary Marcus credit for, if it turns out to be true (and I agree it has gotten more likely lately), is the prediction that AGI will not pop out purely from scaling up existing models. Scaling has gotten us shockingly far (Gary Marcus has been wildly wrong about how far) but there are weak indications that we may, finally, hit something of a wall soon. Or not. If we do, Gary Marcus is going to be insufferable about it.

But ultimately all he’s saying, despite being the flag bearer of the anti-doomers, is that AGI is a few more algorithmic breakthroughs away. It seems that 10-25 years (ish) is about as bearish as the bears get. 30 at the most. My own opinion is that anything from 3 to 30 years is on the table.

Random Roundup

- Machines win the Man vs Machine Hackathon! The winning entry was a “code review tool with heat map for AI generated code”.

- On to my next told-you-so, though this one doesn’t count since I don’t think I commented publicly at the time. Two years ago when Scott Alexander wrote about how bearish superforecasters were on AI, I was nervous that it was evidence I was being taken in by the hype. I’m now relieved to report that the superforecasters were, almost surely, just wrong. As one example (not that any one example proves anything) they gave a 2.3% probability for AI getting gold on the International Math Olympiad by 2025. Recall from a previous AGI Friday that that happened in July.

- There are hundreds of comments on my “Does GPT-5-Thinking make egregious errors?” prediction market on Manifold. Ultimately the answer is yes, but boy do you have to work to find (reliably bad) examples. I guess that was already true with o3.

- Random observation: The spikiness of LLMs is so extreme, and it drives so much of the polarization in these debates. Whether you think LLMs are amazing or you think they’re garbage, it’s so easy to collect anecdotes to support your case. (Well, with a single prompt it’s not easy, but the examples are out there; see previous bullet item.) LLMs seem to oscillate between supergenius and sophistic sycophantic supermoron. I don’t blame people for being put off by them, but these tools have reached the point that they’re so useful it’s crazy not to use them.