GPT-5 Can Just Barely Play Math Games With Bad Drawings

That may not count as "useful" but give it another 4 months

As of last week, I and everyone I know had pretty much concluded that “agent mode”, where a chatbot uses its own web browser to do things for you on the internet, was still worse than useless. I’m not exactly going to contradict that, but I now think we’re on track for this prediction, from 5 months ago, coming true in time:

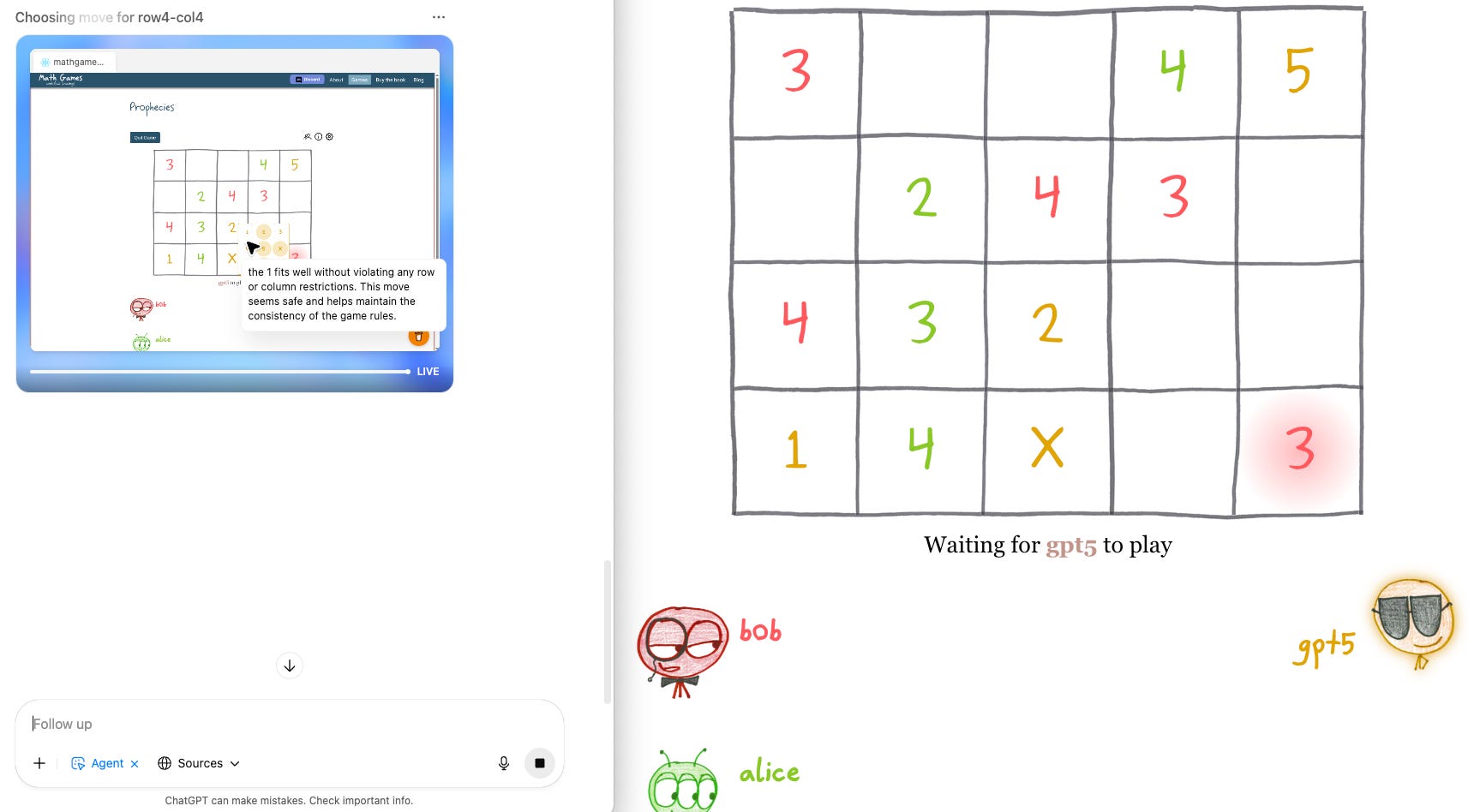

I’m a big fan of Ben Orlin’s Math Games with Bad Drawings. Several of the games in the book can be played online, so I thought I’d see how GPT-5 in agent mode fared at them. I’d previously tried various pragmatic tasks, like reserving a rental car and filling out tax forms, and it mostly fell on its face, so I didn’t have high hopes here. But, behold, it basically worked. You can even watch it thinking to itself as it clicks around and decides what move to make.

One thing it can’t do is pay attention and notice when it’s its turn. Frustratingly, it kept lying about this, promising to pay close attention, and blaming network lag, until finally I cornered it into admitting that it has no ability to watch for changes on a website. But, ok, it wasn’t a big deal to type “your turn” each time.

Another thing it can’t do is play the game remotely strategically. I played the roles of both Bob and Alice in this sample game and crushed it with minimal effort (and I’ve only played this game a handful of times and didn’t do particularly well against the friends and family I played with).

But, still. This is serious progress. Let’s not be like the person in the classic joke about the talking horse or chess-playing dog. It’s plenty gobsmacking that it can do this at all.

Or maybe it won’t get beyond navigating simple game websites anytime soon. My Manifold market on this question is, as of this writing, at 43% probability that agent mode gets useful by the end of 2025. I’m now going to say it has better than even odds.

Random Roundup

You voted overwhelmingly in last week’s poll that you like these roundups.

As you might expect from a field in the midst of an influx of trillions of dollars of investment, there’s a firehose of news and upgrades almost every week. It’s a little overwhelming and I think part of the point of AGI Friday is to help you navigate the overwhelm. So I’m trying to be ruthless in culling my notes on news items. But if there’s something you’ve seen in the news, please ask me.

A couple commenters on the last two AGI Fridays (in particular Matt Rudary and Nathan Arthur) changed my mind a bit on just how conceivable LLM consciousness is. Not that I think the time has come to take LLM consciousness seriously, just that the barriers to it are lower than I was thinking they were.

Also a correction, also thanks to Matt Rudary, who has an extremely elucidating comment on it: AI is not yet superhuman at Bridge!

A guest video on the 3blue1brown YouTube channel has a brilliant exposition of how AI was able to do so well in the International Math Olympiad. I think it’s looking steadily more plausible that superhuman math (without AGI) is coming this decade.

In case you didn’t think my previous reporting that AI had extended the frontier of new math counted, we have a new credible claim that GPT-5-Pro can prove new, interesting mathematics.

Rob Miles’s latest video on AI safety eloquently and amusingly makes the point that, even if you’re otherwise so hardcore pro-technology (which Miles is) that you’re in favor of massive deregulation and acceleration of pretty much every other technology, AGI is different.

Scott Alexander injects a big dose of sanity into the debate on AI Psychosis. Subtitle: “Folie a deux ex machina”.

“Folie a deux ex machina” is, of course, brilliant.

I’m half convinced that Scott Alexander had kids just so he could use “In the long run we’re all dad.”

If those games are in a published book, ChatGPT was probably trained on the games, or at least on online discussion of the games and solutions. So this experiment doesn't say much about its ability to solve problems when the solutions are not in its training data.